Tech

Building Vision for Computers with The Nano AI

Artificial Intelligence is a fascinating subject – and one that’s getting hotter and hotter as time goes on. There are many types of AI, which all work in different ways, but the one we’re looking at today is “Nano AI.” Nano AI is able to create insanely complex vision for computers and the potential implications of this technology are really incredible!

We often take for granted the incredible feat that is our vision. We can easily see a vast array of colors, shapes, and sizes all around us. Our brains process this information quickly and efficiently, but what if we could do even better? What if we could build vision for computers that was just as good as our own? This may sound like science fiction, but it’s actually possible with the help of nano AI. Nano AI aims to give computers that kind of vision, and the potential implications of this technology are far-reaching.

Nano AI: A new form of AI

The Nano AI is a new type of artificial intelligence that is being developed by a team of engineers at the University of Southern California. This new form of AI is designed to be much more efficient and effective than current AI technology. The Nano AI is based on the use of nanotechnology, which allows for the creation of very small devices that can perform complex tasks. The team behind the Nano AI believes that this new technology could be used to create computers that can see and understand the world around them, just like humans do.

One potential application of the Nano AI is in the development of self-driving cars. Current self-driving car technology relies on cameras and sensors to detect objects and navigate roads. However, these systems can be fooled by things like bad weather or road construction. The Nano AI could potentially allow self-driving cars to “see” better, making them much safer.

Another potential application for the Nano AI is in medical diagnosis. Currently, diagnosing diseases depends largely on the judgment of human experts. However, there are many cases where human experts make mistakes. The Nano AI could be used to create diagnostic tools that are more accurate than current methods.

The team behind the Nano AI is currently working on building a prototype of their system. They hope to have a working system within the next few years.

Neural Chip for AI

A neural chip is a microchip that imitates the workings of a human brain. Neural chips are being developed to help computers process information more effectively, as well as to provide them with artificial intelligence (AI).

One of the advantages of using neural chips is that they are built for parallel processing, meaning they can perform many operations simultaneously. This is in contrast to conventional computer chips, which work through comparatively few instruction streams at a time.

Neural chips are also much more energy efficient than traditional computer chips. This is because they operate more like the human brain, which uses far less energy than even the most efficient computers.

One company that is working on developing neural chips is IBM. In 2014, IBM announced that it had created a prototype chip called TrueNorth. This chip was designed to be scalable and efficient, two essential qualities for any AI platform.

There is still some way to go before neural chips are ready for widespread use, but it is clear that they have great potential. In the future, neural chips could help make our devices smarter and more efficient and, by using less energy, reduce our reliance on fossil fuels.

GPU for Machine Learning

GPUs are ideal for machine learning because they can handle the large amounts of data that are required for training machine learning models. They can also speed up inference, making it possible to get results from machine learning models in real time.

A main benefit of employing a GPU for machine learning is that it can significantly reduce the training time for machine learning models. For example, training a deep neural network on a GPU can take just a few days, whereas training the same model on a CPU can take weeks or even months.

Another benefit of using GPUs for machine learning is that they offer significant speedups for inference. Inference is the process of applying a trained machine learning model to new data to make predictions. This is often done in real time, which means that speed is critical. Depending on the model and hardware, GPUs can offer inference speedups of up to 100x compared to CPUs, making them ideal for applications that need fast results.

Neural Network Processing

Neural networks are a type of artificial intelligence that are used to process data in a similar way to the human brain. Neural networks can be used for a variety of tasks, including pattern recognition, image classification, and prediction.

The Nano AI is a neural network processing chip designed to be used in various applications, including drones, robots, and security cameras. The Nano AI can learn and recognize patterns, making it an ideal choice for these types of applications.

The Nano AI is a powerful tool that can help computers become better at vision. With the proper training, the Nano AI can help computers identify objects and people with greater accuracy. This technology has the potential to revolutionize the way computers process information and could have a massive impact on businesses and individuals alike.

Software

Smart City Communications: The Network Infrastructure Behind Smarter, Safer Urban Environments

Smart cities are no longer a vision — they are an active deployment reality for municipalities, utility operators, and government agencies worldwide. But the promise of smarter traffic management, more efficient public services, lower energy consumption, and improved emergency response depends entirely on one foundational capability: reliable, scalable smart city communications infrastructure that connects thousands of sensors, cameras, and edge devices back to the platforms that analyze and act on their data.

This article examines the communications architecture that underlies smart city deployments, the specific connectivity challenges municipalities face, and how layered IoT and Ethernet networking solutions are enabling cities to move from isolated pilot programs to city-wide operational networks.

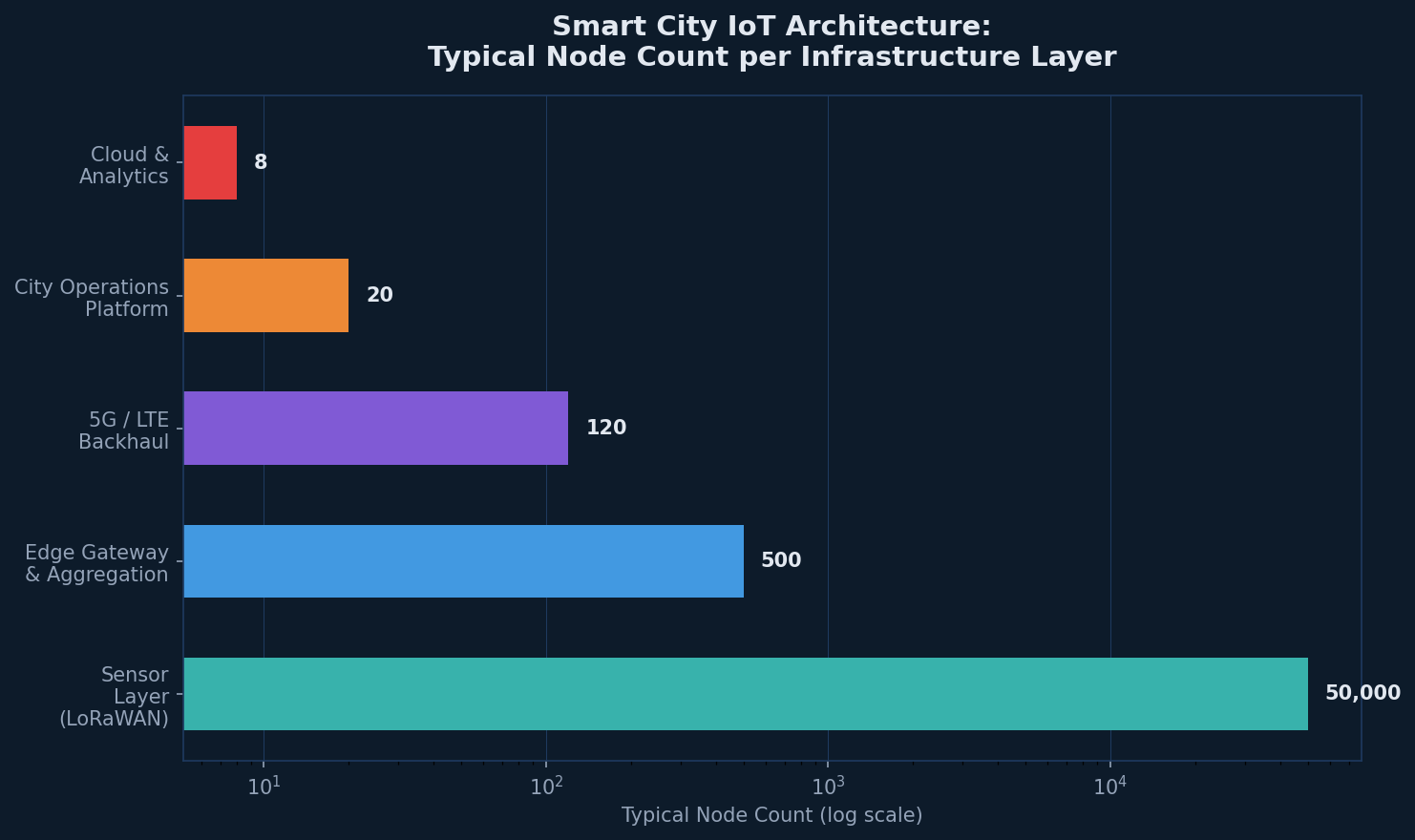

The Smart City Communications Stack: A Layered Architecture

Effective smart city communications are not built on a single technology — they are built on a hierarchy of complementary connectivity layers, each optimized for a different class of device and use case:

- Sensor and device layer: Battery-operated environmental sensors, parking monitors, flood sensors, and utility meters communicate over LoRaWAN — a low-power, long-range protocol designed for small-payload IoT data across wide areas.

- Edge gateway and aggregation layer: LoRaWAN gateways and cellular IoT devices aggregate field data and forward it over higher-bandwidth backhaul to city network infrastructure.

- Access and backhaul layer: 5G, LTE, and Ethernet circuits carry aggregated IoT data, CCTV streams, and traffic management traffic from distributed edge points to city operations centers.

- Operations platform layer: City management platforms ingest, correlate, and act on data from hundreds of thousands of endpoints — generating alerts, automating responses, and providing dashboards for city operators.

The network infrastructure solutions required to support this stack must span diverse connectivity technologies, operate reliably in outdoor urban environments, and scale from pilot deployments to city-wide networks without architectural redesign.

LoRaWAN: The Connectivity Backbone for Smart City IoT Sensors

For the sensor layer — the thousands or tens of thousands of low-power devices that populate a smart city deployment — LoRaWAN has emerged as the dominant connectivity protocol. Its key characteristics make it uniquely suited to municipal IoT deployments:

- Range of up to 10-15 km under line-of-sight conditions, and typically a few kilometers in dense urban environments

- Multi-year battery life for sensor devices operating on small batteries or energy harvesting

- Unlicensed spectrum operation eliminating the need for cellular carrier agreements

- Scalable to millions of devices per network with appropriate gateway density
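
To make the multi-year battery life claim concrete, here is a minimal sketch of a two-state (sleep/transmit) duty-cycle estimate. All figures in the example (cell capacity, sleep and transmit currents, airtime, uplink rate) are hypothetical values chosen for illustration, not measurements of any particular sensor, and the model ignores battery self-discharge and receive windows.

```python
def battery_life_years(capacity_mah, sleep_current_ma, tx_current_ma,
                       tx_seconds, uplinks_per_day):
    """Estimate battery life from a simple sleep/transmit duty cycle."""
    seconds_per_day = 24 * 3600
    tx_time = tx_seconds * uplinks_per_day      # seconds spent transmitting per day
    sleep_time = seconds_per_day - tx_time      # remaining seconds spent asleep
    # Charge drawn per day, converted from mA*s to mAh
    daily_mah = (tx_current_ma * tx_time + sleep_current_ma * sleep_time) / 3600
    return capacity_mah / daily_mah / 365


# Hypothetical sensor: 2400 mAh cell, 5 uA sleep current,
# 40 mA for 1.5 s per uplink, one uplink per hour
years = battery_life_years(2400, 0.005, 40, 1.5, 24)
print(f"Estimated battery life: {years:.1f} years")
```

Under these assumptions the sleep current and the uplink rate dominate the result, which is why infrequent, small-payload reporting matters so much for battery-powered sensors.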

RAD’s SecFlow-1p and ETX-1p devices integrate LoRaWAN gateway functionality with business-class IP routing in a single ruggedized device — enabling cities to deploy LoRaWAN sensor connectivity and IP network infrastructure from a single platform. This integration reduces both deployment cost and operational complexity compared to architectures that require separate LoRaWAN and IP edge devices.

Remote IoT Data Monitoring: Turning Sensor Data into Operational Intelligence

Collecting sensor data is only the first step. The operational value of smart city infrastructure is realized through remote IoT data monitoring — the continuous analysis of sensor streams to detect events, identify trends, and trigger automated responses. For municipalities, this capability enables:

- Flood and environmental monitoring: River level sensors and rain gauges trigger early warning alerts hours before flood events reach urban areas.

- Smart street lighting: Occupancy sensors and light level monitors enable adaptive street lighting that reduces energy consumption by 30-60% compared to fixed schedules.

- Asset tracking and infrastructure monitoring: Vibration and tilt sensors on bridges, tunnels, and public infrastructure provide continuous structural health monitoring.

- Water utility management: Flow meters and pressure sensors detect leaks in real time, reducing non-revenue water losses and enabling proactive maintenance.
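
As a rough illustration of how a monitoring platform might turn a sensor stream into an alert, the sketch below raises an alert once a sensor reports a value at or above a threshold for several consecutive readings. The `Reading` type, the `detect_alerts` function, and the threshold values are hypothetical names and numbers chosen for this example; a production platform would add trend analysis, deduplication, and delivery logic.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor_id: str
    timestamp: float   # seconds since some reference time
    value: float       # e.g. river level in meters


def detect_alerts(readings, threshold, min_consecutive=3):
    """Return (sensor_id, timestamp) for each point where a sensor has
    reported `min_consecutive` readings in a row at or above `threshold`."""
    streak = {}    # sensor_id -> current run of above-threshold readings
    alerts = []
    for r in sorted(readings, key=lambda r: r.timestamp):
        if r.value >= threshold:
            streak[r.sensor_id] = streak.get(r.sensor_id, 0) + 1
            # Fire once, at the moment the run reaches the required length
            if streak[r.sensor_id] == min_consecutive:
                alerts.append((r.sensor_id, r.timestamp))
        else:
            streak[r.sensor_id] = 0
    return alerts


levels = [1.0, 2.1, 2.2, 2.3, 2.4]
readings = [Reading("river-1", t, v) for t, v in enumerate(levels)]
readings += [Reading("river-2", t, v) for t, v in enumerate([2.5, 1.0, 2.5])]
alerts = detect_alerts(readings, threshold=2.0)
print(alerts)
```

Requiring several consecutive readings is one simple way to avoid alerting on a single noisy sample.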

| Smart City Application | Connectivity Technology | RAD Device |
| --- | --- | --- |
| Flood / Weather Sensors | LoRaWAN | SecFlow-1p / ETX-1p |
| Smart Street Lighting | LoRaWAN + Ethernet | SecFlow-1p |
| CCTV & Surveillance | Ethernet / 5G | ETX-2i series |
| Traffic Management | Ethernet + LTE | SecFlow-1v |
| Water Utility Meters | LoRaWAN | ETX-1p (LoRaWAN GW) |

First Responder and Public Safety Communications in Smart City Networks

Smart city communications infrastructure increasingly serves as the backbone for public safety and first responder networks. Police body cameras, emergency dispatch systems, and incident command communications all flow over the same urban network infrastructure that carries parking sensors and smart lighting — making the reliability and security of that infrastructure a public safety matter.

RAD’s SecFlow-1v — recognized with an IoT Security Excellence award — provides the integrated cybersecurity capabilities required when smart city networks carry safety-critical traffic. Its firewall, VPN, and access control features ensure that smart city IoT traffic is isolated from public safety communications, preventing interference and protecting against cyber threats.

Scaling Smart City Networks: From Pilot to City-Wide Deployment

Many smart city programs struggle with the transition from successful pilots to full-scale municipal deployments. The technical and operational challenges that are manageable at 50 devices become critical at 50,000. Key factors that determine scalability include:

- Zero-touch device provisioning: Manually configuring thousands of edge devices is operationally impossible; ZTP is essential for city-scale rollout.

- Centralized remote management: A unified NOC platform that manages all edge devices — regardless of connectivity type — is necessary for city-scale operations.

- Modular network architecture: Designs that allow new use cases and device types to be added without redesigning the underlying network infrastructure.

According to McKinsey’s Global Smart City Report, cities that invest in scalable, platform-based IoT infrastructure recover their technology investment significantly faster than those that deploy fragmented, use-case-specific systems — underlining the importance of architecture decisions made at the outset of smart city programs.

RAD’s Smart City Communications Portfolio

RAD’s approach to smart city IoT communications combines LoRaWAN gateway integration, ruggedized Ethernet access, and IoT security capabilities into a cohesive product portfolio purpose-built for municipal deployments. RAD devices are certified for outdoor and harsh environments, support remote management via standard network management protocols, and integrate with major IoT platform vendors through standard APIs.

With RAD as a network infrastructure partner, municipalities gain both the edge connectivity hardware and the integration expertise to build smart city networks that scale from initial deployment through full city-wide operation. For current RAD smart city deployment perspectives and technical articles, Tech PR Online regularly features RAD’s urban connectivity innovations.

Conclusion

Smart city communications are not a single technology — they are a carefully engineered ecosystem of complementary connectivity layers, purpose-built edge devices, and integrated management platforms. Cities that invest in the right foundational network infrastructure today — scalable, secure, and multi-technology — are building the platform for a generation of urban innovation. Those that treat connectivity as an afterthought risk finding their smart city ambitions constrained by the infrastructure choices made at the start.

Saas

5G Use Cases in 2025: How Network Infrastructure Is Evolving to Meet New Demands

The global 5G rollout has moved well past the early-adopter phase. In 2025, mobile operators, enterprises, and critical infrastructure providers are actively deploying 5G networks — and the range of 5G use cases enabled by this technology continues to expand. From enhanced mobile broadband to mission-critical machine communications, 5G is fundamentally reshaping what is possible at the network edge.

Yet the success of 5G deployments depends heavily on underlying transport infrastructure. Cell site connectivity — fronthaul, midhaul, and backhaul — must be engineered to handle the strict latency, synchronization, and bandwidth requirements that 5G imposes. This article explores the most important 5G use cases driving network evolution in 2025 and the transport infrastructure innovations enabling them.

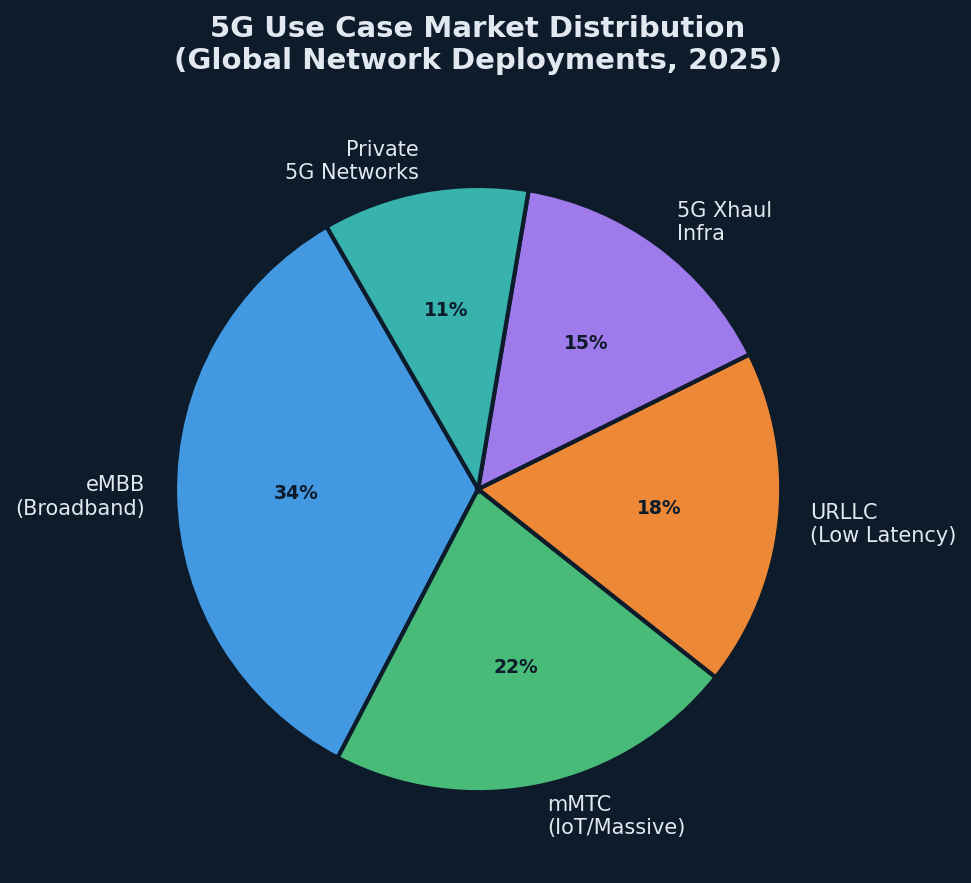

Understanding the 5G Use Case Landscape

The 3GPP standards body defines three primary 5G service categories, each demanding different network characteristics:

- eMBB (Enhanced Mobile Broadband): High-bandwidth applications including 4K/8K video, augmented reality, and fixed wireless access. Demands high throughput but tolerates moderate latency.

- mMTC (Massive Machine-Type Communications): Large-scale IoT deployments — smart city sensors, utility meters, logistics tracking. Requires broad coverage and energy efficiency over raw speed.

- URLLC (Ultra-Reliable Low-Latency Communications): Mission-critical applications including autonomous vehicles, industrial automation, and remote surgery. Demands sub-millisecond latency and extremely high reliability.

Each category places distinct requirements on network transport — and the infrastructure choices made at the cell site determine whether these SLAs can actually be met.

5G Xhaul: The Transport Architecture Enabling Every Use Case

5G xhaul is the collective term for the fronthaul, midhaul, and backhaul transport segments that connect 5G radio units (RUs), distributed units (DUs), and centralized units (CUs) to the core network. As 5G architectures disaggregate radio functions, xhaul transport becomes more complex — and more consequential.

Fronthaul, which connects the RU to the DU, carries raw radio samples and demands the strictest timing: sub-100 nanosecond synchronization accuracy aligned with IEEE 1588 Precision Time Protocol (PTP). Midhaul connects the DU to the CU and typically tolerates millisecond-level latency. Backhaul, which connects the CU to the core, carries aggregated user traffic and must support high bandwidth with deterministic behavior.

RAD’s all-in-one 5G xhaul cell site gateway simplifies this architecture by integrating fronthaul, midhaul, and backhaul transport into a single, compact device. This consolidation reduces cell site footprint, simplifies operations, and provides a unified point of management for all xhaul transport segments — a significant advantage for operators managing thousands of 5G sites.

Top 5G Use Cases Reshaping Networks in 2025

| 5G Use Case | Key Network Requirement | Primary Sector |
| --- | --- | --- |
| 5G Fronthaul/Midhaul | Sub-100 ns sync, low latency | Telecoms / CSP |
| Private 5G Networks | Network slicing, isolation | Industry / Manufacturing |
| Smart City IoT | mMTC, LoRaWAN integration | Government / Municipal |
| Fixed Wireless Access | High throughput eMBB | Residential / Enterprise |
| Critical Infrastructure | URLLC, high availability | Utilities / Transport |

Private 5G Networks: The Enterprise 5G Use Case Gaining Momentum

Private 5G networks — where enterprises deploy their own licensed or shared spectrum 5G infrastructure on-premises — are among the fastest-growing segments of the 5G use case landscape. Manufacturing plants, logistics hubs, ports, and mining operations are deploying private 5G to enable mobile automation, real-time quality inspection, and autonomous vehicle coordination.

The appeal is clear: private 5G offers the coverage, latency, and reliability of 5G with the security and control of a private network — without depending on shared public 5G capacity. For operators of critical assets, this control is invaluable.

RAD’s 5G cell site gateway solutions are designed to support both public and private 5G deployments, providing the synchronization accuracy and transport flexibility required for disaggregated RAN architectures used in private 5G environments.

5G and Smart City Communications: Connecting Urban Infrastructure

Smart city applications represent one of the most visible and socially impactful 5G use cases in deployment today. Traffic management systems, environmental monitoring networks, connected streetlights, and public safety communications are all candidates for 5G-connected infrastructure.

The convergence of 5G with LoRaWAN — which handles low-power, long-range sensor connectivity — creates a layered urban connectivity architecture. 5G handles bandwidth-intensive and latency-sensitive applications, while LoRaWAN aggregates data from battery-powered sensors across the city. RAD’s ETX-1p combines business routing with LoRaWAN gateway functionality, making it a practical building block for smart city deployments that span both connectivity layers.

Network Synchronization: The Hidden Enabler of 5G Use Cases

Beneath every 5G use case lies a synchronization requirement that is often underestimated until it causes problems. Fronthaul timing accuracy, inter-site coordination for interference management, and network slicing all depend on a timing fabric that extends from the core to every cell site.

IEEE 1588v2 Precision Time Protocol (PTP) and SyncE are the standards-based mechanisms used to distribute timing across 5G transport networks. RAD’s solutions support both, with hardware timestamping accuracy that meets the strictest 5G fronthaul timing requirements. This capability is not optional for URLLC or massive MIMO deployments — it is fundamental.
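
To show what PTP actually computes, here is a minimal sketch of the delay request-response arithmetic: from four timestamps exchanged between a master and a slave clock, the slave derives its offset from the master and the mean path delay. The function name and the example timestamps are illustrative, and the calculation assumes the forward and reverse path delays are equal; any asymmetry shows up directly as timing error.

```python
def ptp_offset_and_delay(t1, t2, t3, t4):
    """Compute (offset, mean_path_delay) from one PTP exchange.

    t1: master clock time when Sync was sent
    t2: slave clock time when Sync was received
    t3: slave clock time when Delay_Req was sent
    t4: master clock time when Delay_Req was received
    Assumes the forward and reverse path delays are equal.
    """
    master_to_slave = t2 - t1     # path delay plus clock offset
    slave_to_master = t4 - t3     # path delay minus clock offset
    mean_path_delay = (master_to_slave + slave_to_master) / 2
    offset = (master_to_slave - slave_to_master) / 2   # slave minus master
    return offset, mean_path_delay


# Example in nanoseconds: slave runs 150 ns ahead, one-way delay is 2000 ns
offset, delay = ptp_offset_and_delay(t1=0, t2=2150, t3=10_000, t4=11_850)
print(offset, delay)
```

Because the result is only as good as the timestamps, hardware timestamping close to the physical layer is what makes nanosecond-class accuracy feasible.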

RAD’s 5G Transport Portfolio: Built for Every Xhaul Segment

RAD has positioned its network edge portfolio to address the full range of 5G transport requirements — from cell site gateway consolidation to Ethernet demarcation for 5G business services. The company’s all-in-one 5G xhaul solution provides a cost-effective approach to multi-segment transport, while the ETX-2i series delivers MEF-certified demarcation for 5G-delivered enterprise services.

With deep expertise in timing, synchronization, and carrier-grade Ethernet — and a global deployment footprint spanning 150+ countries — RAD brings both the technology and the operational experience to help carriers execute successful 5G infrastructure builds at scale.

Conclusion

The 5G use case landscape in 2025 is broad, diverse, and accelerating. From smart cities and private industrial networks to mission-critical URLLC applications, the value of 5G depends entirely on the quality of the transport infrastructure beneath it. Network operators who invest in purpose-built xhaul solutions today are laying the foundation for a decade of 5G service innovation — and the competitive advantages that come with it.

Software

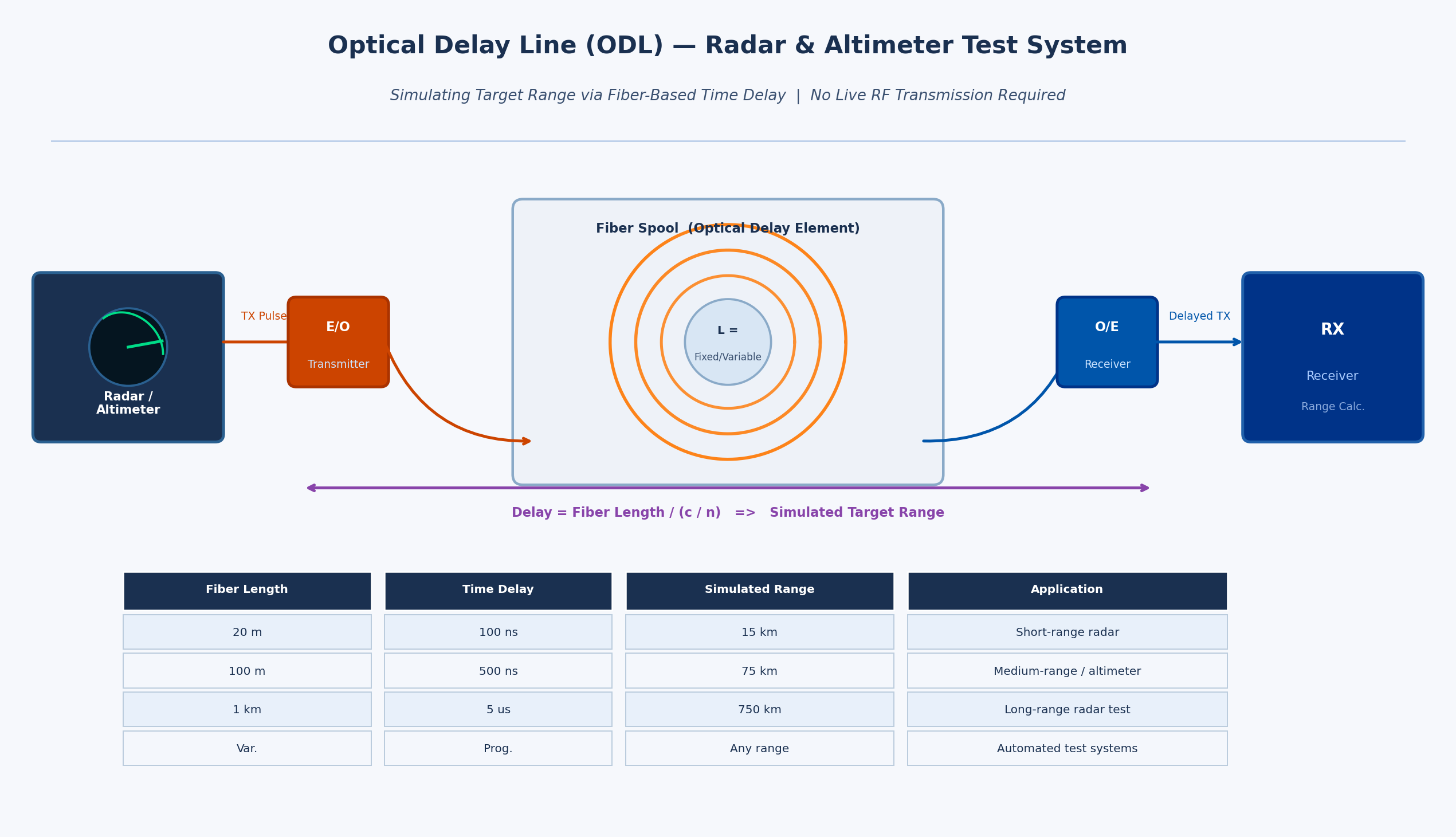

Optical Delay Lines: The Precision Solution Reshaping Radar and Altimeter Testing

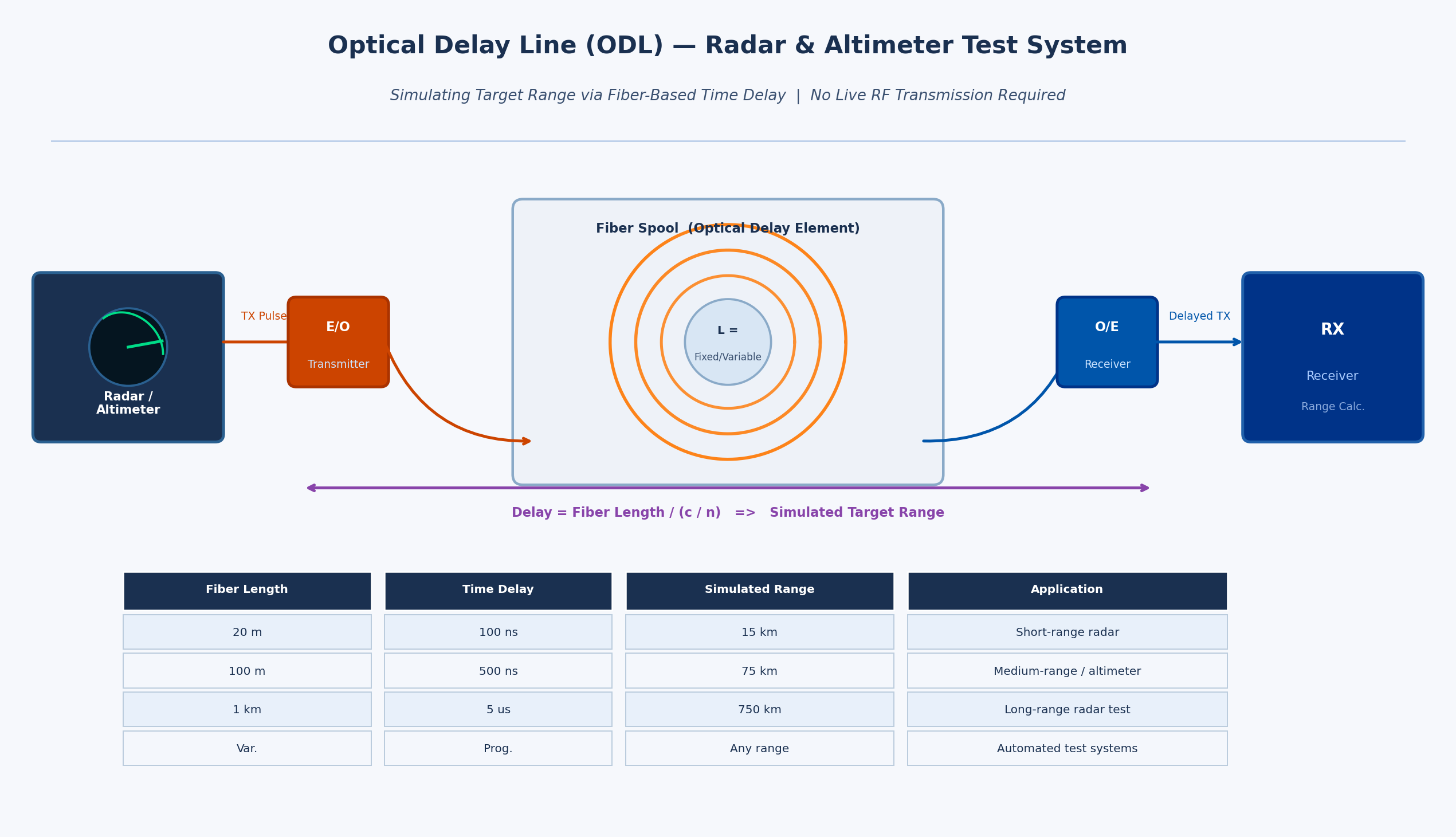

Radar and altimeter systems must be rigorously tested and calibrated before deployment — but transmitting live RF energy to simulate target returns is impractical, hazardous, and often impossible in a laboratory or depot environment. This article explains how optical delay lines (ODLs) solve this fundamental challenge, how they work, why fiber-based delay lines outperform electronic alternatives, and how RFOptic’s specialized ODL solutions support radar and altimeter testing programs across defense and aviation markets.

Radar and altimeter testing is one of the most technically demanding areas in defense electronics validation. Systems must be verified to perform accurately across a range of simulated target distances, velocities, and environments — yet doing so by physically placing reflecting targets at the required distances is seldom feasible. The solution lies in optical delay lines, a technology that uses the fixed propagation speed of light in optical fiber to introduce precisely controlled time delays into an RF signal, simulating the time-of-flight of a radar return at a specified range.

The Testing Problem: Why You Cannot Simply Transmit to a Real Target

A radar system determines the range of a target by measuring the round-trip time of a transmitted pulse. An altimeter determines altitude by measuring the time for the transmitted signal to reflect off the ground and return. In both cases, the fundamental measurement is time-of-flight — and testing this measurement requires introducing a known, accurate delay between the transmitted signal and the simulated return.

In field testing, this can be done by physically placing a reference reflector at a known distance. But field testing is expensive, weather-dependent, logistically complex, and often impossible for airborne altimeters (which would require flight testing to validate each range point) or for classified radar systems that cannot be operated in environments where frequency emissions are monitored or regulated. Depot-level maintenance and factory acceptance testing require a bench solution.

Electronic delay lines — switched networks of lumped inductors and capacitors, or surface acoustic wave (SAW) devices — have historically been used for this purpose. But they carry significant limitations: limited frequency range, high insertion loss, temperature-dependent performance, and the inability to cover the multi-microsecond delays needed to simulate distant targets without cascading multiple stages and accumulating noise and distortion.

How an Optical Delay Line Works

An optical delay line converts the RF signal to be delayed into an optical signal using an electro-optic modulator or laser diode, routes that optical signal through a calibrated length of single-mode optical fiber, then reconverts it back to an RF signal at the output using a photodetector. Since light travels through fiber at approximately 2×10⁸ meters per second (about two-thirds of the speed of light in vacuum), a specific fiber length produces a very precise and stable delay.

For example, approximately 100 meters of fiber produces a delay of around 500 nanoseconds, equivalent to a radar range of approximately 75 meters in a monostatic radar configuration, because the radar signal travels out and back at the free-space speed of light. Variable delay lengths can be achieved through switched fiber spools, allowing test equipment to simulate targets at multiple programmable ranges without moving any physical hardware.
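
The relationship between fiber length, delay, and simulated target range can be sketched in a few lines. The group index of 1.5 is an assumption chosen to match the approximate 2×10⁸ m/s figure quoted above; real single-mode fiber is slightly different, and a real ODL also adds a fixed delay from the electro-optic conversion and cabling that this sketch ignores.

```python
C_VACUUM = 299_792_458.0    # speed of light in vacuum, m/s
FIBER_GROUP_INDEX = 1.5     # assumed; gives roughly 2e8 m/s in fiber


def fiber_delay_s(length_m, group_index=FIBER_GROUP_INDEX):
    """Delay introduced by a given length of fiber, in seconds."""
    return length_m * group_index / C_VACUUM


def simulated_range_m(delay_s):
    """Monostatic target range whose round-trip time of flight in free
    space equals the given delay."""
    return C_VACUUM * delay_s / 2


def fiber_length_for_range_m(range_m, group_index=FIBER_GROUP_INDEX):
    """Fiber length needed to simulate a target at the given range."""
    return 2 * range_m / group_index


delay = fiber_delay_s(100.0)
print(f"100 m of fiber: {delay * 1e9:.0f} ns, "
      f"simulated range {simulated_range_m(delay):.0f} m")
print(f"Fiber for a 15 km target: {fiber_length_for_range_m(15_000):.0f} m")
```

Note the factor of two: the fiber delay stands in for the full out-and-back path, so the simulated range is half the free-space distance light would cover in that time.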

The key performance advantages of fiber-based delay lines compared to electronic alternatives are:

- Extremely low loss: optical fiber introduces negligible signal loss per unit length compared to coaxial cable or electronic delay elements at microwave frequencies.

- Frequency independence: the delay is determined purely by the fiber length, not the frequency of the signal. Within the bandwidth of its modulator and photodetector, the same ODL works equally well at 1 GHz and at 40 GHz, making it suitable for multi-band radar and wideband altimeter testing.

- Excellent phase stability: fiber delay is not affected by electromagnetic interference and shows very low thermal drift compared to electronic delay networks.

- Scalability: very long delays (microseconds to tens of microseconds), equivalent to hundreds of meters to several kilometers of range, are achievable simply by using more fiber, without cascading lossy electronic stages.

- Electrical isolation: optical fiber passes no DC current and provides complete galvanic isolation between the input and output RF ports, eliminating common-ground interference paths in complex test setups.

Variable and Programmable Optical Delay Lines

The most operationally useful ODL systems offer variable or programmable delay — the ability to switch between multiple discrete delay values to simulate different target ranges. This is achieved through optical switching networks that connect the RF signal to different fiber spools of different lengths, or through continuous variable delay mechanisms using motorized fiber stretchers or optical path length adjustment.

Programmable delay lines are essential for acceptance testing of radar systems that must perform across the full specified range envelope. Rather than resetting physical hardware for each range point, the test engineer selects the desired delay from the ODL’s control interface, and the system switches to the appropriate fiber path within milliseconds. For automated production test environments, this enables rapid, software-controlled multi-point range calibration.
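
One common way to build a switched delay line is a binary-weighted bank, where each stage either bypasses or switches in a spool twice as long as the previous one. The sketch below assumes that architecture purely for illustration; the function name, the 50 ns step, and the 8-stage bank are hypothetical and do not describe any specific product's control interface.

```python
def spool_settings(target_ns, step_ns=50.0, bits=8):
    """Return (switch_states, achieved_ns) for a binary-weighted spool bank.

    switch_states[k] is True when the spool contributing step_ns * 2**k
    should be switched into the optical path.
    """
    max_code = (1 << bits) - 1
    code = round(target_ns / step_ns)        # nearest achievable step
    code = max(0, min(max_code, code))       # clamp to the bank's range
    states = [bool((code >> k) & 1) for k in range(bits)]
    return states, code * step_ns


states, achieved = spool_settings(500.0)
print(states, achieved)
```

With 8 stages and a 50 ns step, such a bank would cover 0 to 12.75 µs in 50 ns increments using only eight spools, which is why binary weighting is attractive for automated multi-point range calibration.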

According to the IEEE Transactions on Microwave Theory and Techniques, optical delay line technology has advanced considerably with the integration of programmable switching and temperature compensation, making modern ODL systems suitable for demanding calibration environments where measurement uncertainty must be minimized.

Altimeter Testing: A Specialized Requirement

Radio altimeters — used in commercial aviation, military aircraft, and UAVs to measure height above terrain — are safety-critical systems with stringent testing requirements. Regulatory bodies including the FAA and EASA require verification of altimeter accuracy across the full operating altitude range, typically from near-zero to several thousand feet. Testing each altitude point requires introducing the corresponding time delay between the transmitted altimeter signal and the simulated ground return.

Modern radar altimeters typically operate in the 4.2–4.4 GHz frequency band, though next-generation systems and those for unmanned platforms span wider ranges. Key testing parameters include:

- Absolute accuracy: the altimeter must measure altitude to within a defined tolerance across the full range.

- Response time: the altimeter must update its reading within a specified latency when altitude changes rapidly — important for terrain-following and automatic landing systems.

- Interference immunity: with 5G networks now deployed in the 3.7–4.2 GHz C-band in many countries, regulatory concerns about altimeter interference have made test coverage of adjacent-band interference scenarios a new requirement.

An optical delay line test system for altimeter applications must cover the altimeter’s full altitude range (typically equivalent to delays from a few nanoseconds to several microseconds), handle the altimeter’s specific frequency band, and provide calibrated, repeatable delay values. For aircraft integration testing, the system must also operate reliably in the electromagnetic environment of an avionics test bench.
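
As a rough guide to sizing such a system, the sketch below converts altitude test points in feet into the round-trip delay the altimeter expects and the fiber length that would produce it. The test altitudes and the fiber group index of 1.5 are assumptions for illustration, and the fixed delay of the test set's own electronics and cabling is ignored.

```python
C_VACUUM = 299_792_458.0    # speed of light in vacuum, m/s
FT_TO_M = 0.3048            # meters per foot
FIBER_GROUP_INDEX = 1.5     # assumed group index of the fiber


def altitude_to_delay_ns(altitude_ft):
    """Round-trip free-space delay for a given altitude, in nanoseconds."""
    return 2 * altitude_ft * FT_TO_M / C_VACUUM * 1e9


def altitude_to_fiber_m(altitude_ft, group_index=FIBER_GROUP_INDEX):
    """Fiber length that produces the same delay as the given altitude."""
    return 2 * altitude_ft * FT_TO_M / group_index


for alt_ft in (10, 100, 1000, 2500):
    print(f"{alt_ft:>5} ft: {altitude_to_delay_ns(alt_ft):8.1f} ns, "
          f"{altitude_to_fiber_m(alt_ft):7.1f} m of fiber")
```

A useful rule of thumb that falls out of this arithmetic is roughly 2 ns of round-trip delay per foot of altitude.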

RFOptic’s Optical Delay Line Solutions

RFOptic offers customized low and high frequency optical delay line solutions for testing and calibrating radar and altimeter systems. The company’s ODL product line is described as one of its core competencies, offering both standard and application-specific configurations.

RFOptic provides both fixed and programmable delay configurations, with the following key characteristics as described on their platform:

- Coverage from low frequency through high-frequency microwave and mmWave bands, supporting both current-generation radar and altimeter systems and next-generation wideband applications.

- Customized ODL systems developed to customer specifications, including integration with specific test equipment interfaces and control software.

- Online request-for-quote tool for customized ODL and altimeter ODL systems, supporting design consultation from the earliest project stage.

- Subsystem integration: RFOptic’s ODLs can be integrated into complete radar and altimeter test subsystems, combining the delay function with signal conditioning, switching, and management interfaces.

RFOptic’s value proposition emphasizes that in the pre-sales stage, the company builds solutions tailored to customer needs, including simulations that predict link behavior — particularly important for ODL systems where target delay accuracy and dynamic range must be verified analytically before hardware is built.

Emerging Applications: UAV Altimeters and Radar Testing

The rapid growth of unmanned aerial systems (UAS/UAV) has created a new generation of altimeter testing requirements. Drone altimeters are smaller, lighter, and often operate in different frequency bands than traditional aviation altimeters. They must be validated for low-altitude terrain-following, precision landing approaches, and operation in spectrum-contested environments. The same fundamental principle applies: fiber-based optical delay lines provide the most accurate and flexible platform for simulating the required altitude ranges in a laboratory setting.

For those evaluating radar testing solutions, the combination of programmable delay ranges, wide frequency coverage, and low noise floor that optical delay lines provide makes them the reference tool of choice across military radar, commercial aviation, and UAV development programs.

Conclusion

Optical delay lines represent a technically elegant solution to one of the oldest problems in radar and altimeter development: how to test time-of-flight accuracy without deploying hardware into the field. By leveraging the fixed and stable propagation speed of light in optical fiber, ODL systems deliver highly accurate, repeatable, and frequency-independent delay values that electronic alternatives cannot match at microwave and mmWave frequencies.

For radar system developers, avionics test labs, and depot maintenance facilities, investing in optical delay line test equipment — particularly programmable systems capable of simulating multiple range points — is a practical step that reduces test time, improves calibration accuracy, and future-proofs the test infrastructure for next-generation wideband radar and altimeter systems.
