Tech

Networking in IoT

In an industrial internet of things system, IoT devices need to communicate with each other in addition to being connected to an IoT gateway. By definition, the industrial internet of things refers to interconnected sensors, instruments and other devices networked together with computers’ industrial applications.

Why You Need Decent Networking Platforms

Data and Analytics

The Internet of Things cannot exist without a network to support it. Sensors generate large volumes of data that must be processed in real time to yield useful insights. This influx of data puts heavy strain on the networking systems in use today.

If the network is unable to keep up, it impedes data processing and analysis. As you scale up, your current industrial IoT networking solution is likely to struggle to keep up with future IoT demands.

Computing

IoT setups need to process events and data with minimal latency. This is crucial in delivering useful data to make decisions in real time.

Normally, IoT devices are optimized for power and cost – which limits the computing power available in these devices. This can be remedied by implementing an IoT gateway. On top of this, you can also leverage a network that can support an application hosting environment which allows IoT vendors to host their software locally.

The capacity of the network also determines how much computation on the network elements themselves can be used for tasks such as traffic management.

Connectivity

At its most basic, IoT is a network of interconnected devices that communicate with each other. To facilitate this, they need dedicated connectivity to the controllers that manage the whole device ecosystem. This connectivity can be either wireless or wired.

The network infrastructure should be able to support the protocols that make the most sense in the IoT setting you are in.

Security

A lot goes into a basic IoT setting, from operational technology to information technology. They all need to be meshed together – which opens up doors to potential attacks from hackers. This makes security a crucial consideration.

It goes without saying that the network needs to evolve to protect and secure the system from attacks that originate from its own infected IoT devices.

Benefits of Networking Platforms

These industrial IoT solutions come with different capabilities, and some are more advanced than others. What organizations are looking for is better visibility of all connected devices, even as the number of connected devices continues to grow.

On top of gaining visibility, there is also the need to control each device’s access to the network and apply security to IoT devices that don’t come with embedded security features. IoT devices on the networking platform also need regular monitoring so that anomalies can be detected quickly and rectified efficiently.

Choosing one of the more complex industrial IoT solutions for networking will give you some extra benefits like these:

Automated IoT Behavioral Monitoring

Advanced network solutions employ machine learning algorithms to learn the expected behavior of network endpoints. On top of basic monitoring, they can automatically trigger alerts and respond accordingly when an endpoint acts unusually.

These solutions take an unsupervised machine learning approach to enforcing IoT security, delivering threat detection and mitigation without human input.
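As a rough illustration of the idea, the sketch below learns a baseline from unlabeled traffic readings and flags values that fall far outside it. It is a deliberately simple statistical stand-in (a z-score test), not the method of any particular product; the function names, the packets-per-minute metric, and the threshold of 3 standard deviations are all assumptions made for the example.

```python
from statistics import mean, stdev

def learn_baseline(samples):
    """Learn mean and standard deviation from unlabeled readings."""
    return mean(samples), stdev(samples)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a reading more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical packets-per-minute readings for one endpoint in normal operation
normal_traffic = [98, 102, 101, 97, 100, 103, 99, 100]
baseline = learn_baseline(normal_traffic)

for reading in (101, 450):
    if is_anomalous(reading, baseline):
        print(f"ALERT: {reading} packets/min deviates from the learned baseline")
```

A production system would track many features per endpoint and update the baseline over time, but the principle is the same: no labeled attack data is needed, only a model of normal behavior.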

Consistent Policy-Based Security

Complex network solutions offer the ability to easily apply whitelist profiles to IoT devices to limit communication to only what is authorized.

Controlled network access is also provided to ensure that authorized IoT devices are onboarded as quickly and efficiently as possible, while compromised devices are quickly identified and quarantined from the network.
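A minimal sketch of how a whitelist profile and quarantine rule might fit together is shown below. The device names, hosts, ports, and the `authorize` function are hypothetical and chosen only for illustration; real platforms enforce this in network hardware or a policy engine rather than in application code.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Flow:
    device_id: str
    dest_host: str
    dest_port: int

# Hypothetical whitelist profiles: device -> permitted (host, port) destinations
PROFILES = {
    "temp-sensor-01": {("gateway.local", 8883)},
    "camera-07": {("gateway.local", 8883), ("nvr.local", 554)},
}

quarantined = set()

def authorize(flow: Flow) -> bool:
    """Allow only whitelisted flows; quarantine a device that strays."""
    if flow.device_id in quarantined:
        return False
    allowed = PROFILES.get(flow.device_id)
    if allowed is None:
        return False  # unknown device: not onboarded yet
    if (flow.dest_host, flow.dest_port) in allowed:
        return True
    quarantined.add(flow.device_id)  # unauthorized destination: isolate device
    return False

print(authorize(Flow("camera-07", "nvr.local", 554)))
```

Note the design choice in this sketch: once a device attempts an unauthorized flow, even its previously permitted traffic is blocked until someone clears the quarantine.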


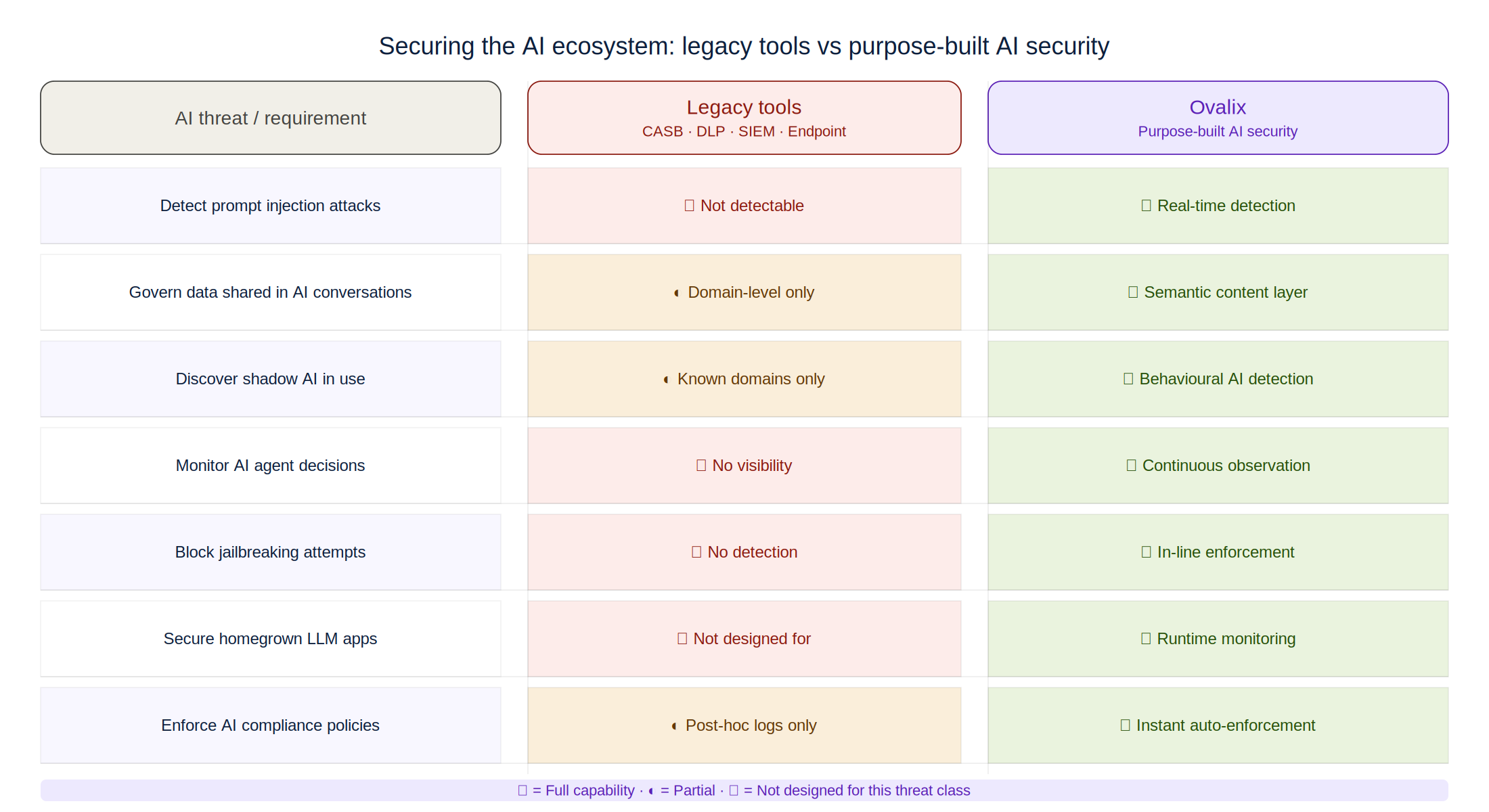

Secure the AI Ecosystem: Purpose-Built AI Security vs Legacy Tools

At a Glance

- The race to secure the AI ecosystem has exposed a fundamental mismatch: the tools enterprises rely on for cybersecurity were designed for a world before generative AI, agentic workflows, and large language models existed at enterprise scale.

- Legacy tools – CASB, DLP, SIEM, and endpoint security – can block AI tool access or flag data movement, but they cannot inspect AI interactions, detect prompt injection, or govern the autonomous decisions of AI agents.

- Purpose-built AI security platforms like Ovalix are designed from the ground up for AI-specific threat vectors, providing the visibility, governance, and runtime protection that legacy stacks cannot deliver.

In 2025, most enterprise security teams found themselves in an uncomfortable position: AI adoption had outpaced their ability to secure it. Employees were using dozens of public AI tools, development teams were deploying homegrown AI applications, and autonomous AI agents were being given access to sensitive systems – all under security architectures never designed to handle any of it. The question facing CISOs is not whether to secure the AI ecosystem. It is which type of solution is actually capable of doing so.

What Legacy Tools Were Built For


Cloud Access Security Brokers (CASBs) were designed to govern SaaS application access – applying policy to which apps employees could use and what data they could move to them. Data Loss Prevention (DLP) tools were built to identify and block the transfer of sensitive data based on content patterns. SIEM platforms were designed to aggregate and correlate security events from known infrastructure. Endpoint security monitored and protected the device layer.

Each of these tools was built for an era of predictable application behaviour, defined data flows, and static threat signatures. None of them anticipated a world in which employees would have natural-language conversations with external AI models, development teams would deploy applications whose behaviour is fundamentally probabilistic rather than deterministic, or automated agents would take actions across systems with minimal human oversight.

Applied to AI, these tools face a capability gap that is architectural, not configurational. A CASB can block access to ChatGPT or Claude. It cannot inspect what prompt was sent, whether sensitive data was included, or whether the AI’s response contained harmful or hallucinated content. A DLP system can flag when a document is uploaded to an AI service. It cannot identify when an employee describes proprietary information conversationally across twenty exchanges.

The AI-Specific Threat Landscape Legacy Tools Miss

Securing the AI ecosystem requires addressing threats that did not exist before generative AI. Prompt injection attacks – where malicious instructions embedded in input data manipulate an AI model’s behaviour – are undetectable by signature-based security tools because the attack happens within a natural language conversation, not through malware or a network exploit. Jailbreaking techniques that circumvent an AI model’s safety constraints produce no network-layer indicators that a SIEM would recognise.

Agentic AI security presents an even sharper contrast. AI agents – autonomous systems that can browse the web, write and execute code, access APIs, send messages, and make decisions across interconnected tools – represent a fundamentally new threat surface. An AI agent with excessive permissions, manipulated through a prompt injection attack embedded in a webpage it visits, can exfiltrate data, modify files, or trigger actions across enterprise systems with no human review step. No legacy security tool was designed to monitor, govern, or intervene in this kind of autonomous decision-making chain.

Ovalix’s AI agents security capability addresses this directly: continuous observation of every agent communication and decision, automatic enforcement of organisational rules within agentic workflows, and real-time blocking of actions that exceed permitted scope or violate data governance policies. This is not a configuration of an existing security tool – it is a purpose-built capability for a purpose-built threat.
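To make the idea of blocking out-of-scope agent actions concrete, here is a generic sketch of a policy gate that reviews each proposed action before it runs. It does not represent Ovalix's implementation or API; the class names, tool names, and data labels are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentPolicy:
    allowed_tools: frozenset      # tools the agent is permitted to call
    forbidden_labels: frozenset   # data classifications it must not touch

@dataclass(frozen=True)
class ProposedAction:
    tool: str
    data_labels: frozenset = frozenset()

audit_log = []  # every decision is recorded, allowed or not

def gate(action, policy):
    """Return True if the action may run; log the decision either way."""
    if action.tool not in policy.allowed_tools:
        allowed, reason = False, f"tool '{action.tool}' is outside permitted scope"
    elif action.data_labels & policy.forbidden_labels:
        allowed, reason = False, "action touches data barred by governance policy"
    else:
        allowed, reason = True, "within policy"
    audit_log.append((action.tool, allowed, reason))
    return allowed

policy = AgentPolicy(
    allowed_tools=frozenset({"search_docs", "send_summary"}),
    forbidden_labels=frozenset({"customer_pii"}),
)

# An injected instruction asks the agent to email out customer records
risky = ProposedAction("send_email", frozenset({"customer_pii"}))
print(gate(risky, policy), audit_log[-1][2])
```

The point of the sketch is that the check sits between the agent's decision and its execution, so a manipulated agent is stopped before the action happens rather than discovered in logs afterwards.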

Where Purpose-Built AI Security Outperforms Legacy Approaches

The practical differences between legacy tools and purpose-built AI ecosystem security platforms become clear across four dimensions. First, visibility: Ovalix provides deep visibility into AI interactions — not just access logs but the content, context, and risk profile of every exchange between users, applications, and AI models. Legacy tools provide network or file transfer visibility that misses the semantic layer where AI risks actually live.

Second, threat detection: Ovalix continuously monitors for AI-specific attacks including prompt injection, jailbreaking attempts, and model manipulation – threats that have no signature in legacy security databases because they are behaviours, not payloads. Third, data protection: Ovalix enforces data governance at the interaction layer – applying redaction and blocking within AI conversations, not just at file transfer boundaries. Fourth, agentic AI security: Ovalix governs autonomous agent behaviour in real time, enforcing compliance and preventing scope creep that legacy monitoring tools observe only after the fact, if at all.

The question for security teams is not whether legacy tools should be replaced – they remain essential for the threats they were designed for. The question is whether they can be extended to cover AI risk. For most enterprises, the answer is no. AI-specific threats require AI-specific defences.

For organisations serious about securing the AI ecosystem, the path forward combines existing security infrastructure with a dedicated AI security layer. Ovalix sits within that layer — providing the AI-native visibility, governance, and runtime protection that closes the gap between enterprise AI adoption and enterprise AI security. Explore Ovalix’s approach to securing the full AI ecosystem at ovalix.ai, and discover the specific agentic AI security capabilities at the Ovalix AI Agents product page.

Frequently Asked Questions About Securing the AI Ecosystem

What does it mean to secure the AI ecosystem?

Securing the AI ecosystem means protecting all AI-related activity across the enterprise, including employee use of public AI tools, internally developed AI applications, large language models (LLMs), and autonomous AI agents. It involves visibility, governance, data protection, and runtime security.

Why do organizations need purpose-built AI security?

Traditional cybersecurity tools were designed before generative AI and agentic workflows became widespread. Purpose-built AI security platforms are specifically designed to detect threats such as prompt injection, jailbreak attempts, model manipulation, and overprivileged AI agents.

What are legacy security tools?

Legacy security tools include Cloud Access Security Brokers (CASB), Data Loss Prevention (DLP), Security Information and Event Management (SIEM), and endpoint protection platforms.

Can CASB tools secure AI applications?

CASB solutions can control access to AI applications and monitor cloud usage, but they generally cannot inspect prompts, analyze model responses, or detect AI-specific attacks occurring within natural language interactions.

Can DLP tools protect against AI risks?

DLP tools can detect file uploads and content patterns, but they often miss sensitive information shared conversationally across multiple prompts and responses.

Can SIEM platforms detect prompt injection attacks?

SIEM platforms aggregate logs and correlate events, but prompt injection attacks occur within natural language interactions and typically do not generate recognizable signatures for traditional detection rules.

What is prompt injection?

Prompt injection is an attack in which malicious instructions embedded in input data manipulate an AI model into ignoring its intended rules or revealing sensitive information.

What is AI jailbreaking?

AI jailbreaking refers to techniques that bypass a model’s built-in safety controls and content restrictions, causing it to perform actions or generate responses it was designed to prevent.

What is agentic AI security?

Agentic AI security focuses on governing autonomous AI agents that can access enterprise systems, call APIs, execute workflows, and take actions without constant human approval.

Why are AI agents a unique security risk?

AI agents can make decisions and perform actions across multiple systems. If they are overprivileged or manipulated, they may exfiltrate data, modify records, or trigger unauthorized processes at machine speed.

What is the difference between securing AI tools and securing AI agents?

Securing AI tools focuses on user interactions with models and applications, while securing AI agents involves monitoring and controlling autonomous behavior, permissions, and decision-making.


Disease Resistance in Commercial Pepper Varieties: Why Tobamovirus Protection Has Become the Industry’s Non-Negotiable Trait

Introduction

No single agronomic factor has greater influence on commercial pepper profitability than disease management – and no single category of disease has created more disruption in recent years than tobamoviruses. Tomato Brown Rugose Fruit Virus (ToBRFV) and its relatives have swept through greenhouse pepper and tomato operations on multiple continents, triggering crop failures, export bans, and multimillion-dollar losses for growers and packers alike. In this environment, disease resistance packaging in commercial seed varieties has shifted from a desirable trait to an absolute prerequisite for market participation. Seed breeders who can deliver durable, broad-spectrum resistance within commercially competitive varieties are positioned to define the next decade of the fresh pepper sector. BreedX develops conventional pepper varieties with disease resistance packages built for the specific pathogen pressures that greenhouse and field growers face in major production regions.

Understanding the Pathogen Landscape in Commercial Pepper Production

Commercial pepper crops – particularly those grown in high-density greenhouse environments – face a range of economically significant diseases. Each pathogen operates differently and requires different resistance mechanisms in the variety:

| Pathogen | Type | Avg. Crop Loss (unmanaged) | Primary Impact |
|---|---|---|---|
| Tobamovirus (ToBRFV & Tm variants) | Virus | 40–100% | Fruit deformation, mosaic, full crop failure |
| Powdery Mildew (Leveillula taurica) | Fungal | 20–40% | Leaf necrosis, reduced photosynthesis, defoliation |
| Phytophthora capsici | Oomycete | 30–80% | Root and crown rot; damping off in warm/wet conditions |
| Botrytis cinerea (Grey Mould) | Fungal | 10–30% | Post-harvest fruit rot; major pack-out losses |
| Pepper Mild Mottle Virus (PMMoV) | Virus | 15–50% | Fruit discoloration, mosaic; major in greenhouse pepper |

Source: European and Mediterranean Plant Protection Organization (EPPO) Disease Data; USDA AMS Crop Report Estimates 2024

The ToBRFV Crisis: A Case Study in Resistance Urgency

Tomato Brown Rugose Fruit Virus emerged as a significant threat to greenhouse pepper and tomato production beginning in the mid-2010s. By 2023, it had been confirmed in over 40 countries across Europe, North America, the Middle East, and Asia. Unlike earlier tobamovirus strains, ToBRFV overcomes the Tm-2² resistance gene that had been standard protection in commercial varieties for decades – rendering existing resistant material vulnerable.

The consequences for unprotected growers have been severe:

- Complete crop losses reported in affected greenhouse compartments, particularly in the Netherlands, Spain, and Israel

- Export restrictions imposed by multiple national authorities on peppers and tomatoes from ToBRFV-positive zones

- Quarantine protocols requiring destruction of infected plant material and full greenhouse sanitation between cycles

- Significant insurance and financial exposure for operations without documented resistance deployment

The response from leading seed breeding companies has been to fast-track the development of new resistance sources. BreedX pepper breeding programs prioritize disease resistance packaging that addresses current and emerging pathogen threats – ensuring that commercial growers are not caught exposed by a resistance-breaking strain event.

How Conventional Breeding Delivers Durable Resistance

Resistance breeding in conventional (non-GMO) seed development relies on identifying natural resistance genes present in wild pepper species or landraces, then systematically introgressing those genes into elite commercial backgrounds through carefully managed crossing and selection programs. The key principles:

- Resistance gene identification: Wild Capsicum species harbor resistance mechanisms against virtually every major pepper pathogen. Breeders systematically screen wild germplasm under controlled disease challenge conditions to identify useful resistance sources

- Backcross introgression: Once a resistance donor is identified, breeders execute multi-generation backcross programs to transfer the resistance gene into elite commercial backgrounds while recovering yield, quality, and adaptation traits

- Marker-assisted selection: Modern conventional breeding programs use molecular markers linked to resistance genes to accelerate selection and confirm resistance gene presence in breeding lines – reducing the reliance on disease challenge screens at every generation

- Stacking: The most durable commercial varieties stack multiple independent resistance genes against the same pathogen, reducing the probability of resistance breaking by a mutation in the pathogen population

- Commercial trait balance: Resistance must be delivered in a variety that also meets commercial requirements for yield, fruit quality, uniformity, and shelf life – the resistance is only valuable if the variety is commercially competitive in all other dimensions
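Two of the principles above lend themselves to simple arithmetic. The sketch below uses the standard expectation that each backcross halves the remaining donor genome, and treats stacked genes as independent, which is a simplifying assumption. The per-gene breakdown probability used here is a made-up illustrative number, not a measured value for any pathogen.

```python
def recurrent_parent_fraction(backcrosses: int) -> float:
    """Expected share of the elite (recurrent) parent genome after n backcrosses.

    Textbook expectation without marker selection: 1 - (1/2)^(n + 1).
    """
    return 1 - 0.5 ** (backcrosses + 1)

def joint_breakdown_probability(per_gene_probs):
    """Chance that a pathogen overcomes every stacked gene, assuming independence."""
    p = 1.0
    for prob in per_gene_probs:
        p *= prob
    return p

for n in (1, 3, 5):
    print(f"BC{n}: {recurrent_parent_fraction(n):.2%} elite genome expected")

# Hypothetical: each gene alone has a 1-in-10,000 chance of being overcome
single = 1e-4
print(joint_breakdown_probability([single]))
print(joint_breakdown_probability([single, single]))
```

Under these assumptions, stacking a second independent gene multiplies the probabilities together, which is why a stack is expected to be far harder to break than either gene alone. Real durability also depends on factors the sketch ignores, such as pathogen population size and fitness costs.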

What Growers Should Ask Before Selecting a Pepper Variety

Given the economic stakes, variety selection decisions in commercial pepper production deserve rigorous evaluation. The right questions to ask a seed company or sales representative:

- Which tobamovirus strains does the variety carry resistance against — specifically Tm, Tm-2, Tm-2², and ToBRFV resistance sources?

- Is the resistance HR (High Resistance) or IR (Intermediate Resistance) — and under what conditions was it evaluated?

- Has the variety been tested under commercial disease pressure in the specific region and production system where I will be growing?

- What is the company’s protocol for monitoring resistance durability and communicating new pathogen variants to customers?

- Is the resistance package documented and verifiable — or reliant on marketing claims?

Resistance as Commercial Infrastructure

The shift in how the fresh pepper industry views disease resistance is profound. What was once considered an agronomic advantage has become the minimum viable product specification for commercial variety adoption. Retailers and packers increasingly require documented disease resistance programs as a prerequisite for grower partnerships – because a disease outbreak in a supplier’s operation directly affects the buyer’s supply continuity and food safety exposure.

For seed companies, this creates both a responsibility and an opportunity. Those that invest in comprehensive, validated resistance programs – and communicate them transparently – are building the kind of commercial trust that drives long-term grower loyalty. In a market where the next pathogen event could arrive in any growing season, resistance breeding is not just an agronomic service – it is risk management infrastructure for the entire fresh pepper supply chain.

Conclusion

Disease resistance in commercial pepper varieties is the defining technical challenge – and commercial differentiator – of the 2025 seed market. Tobamovirus, powdery mildew, and Phytophthora collectively represent billions of dollars in potential crop exposure for unprotected growing operations. The seed companies and varieties that provide validated, durable, stacked resistance while maintaining commercial productivity are providing genuine value to an industry that cannot afford the alternative.


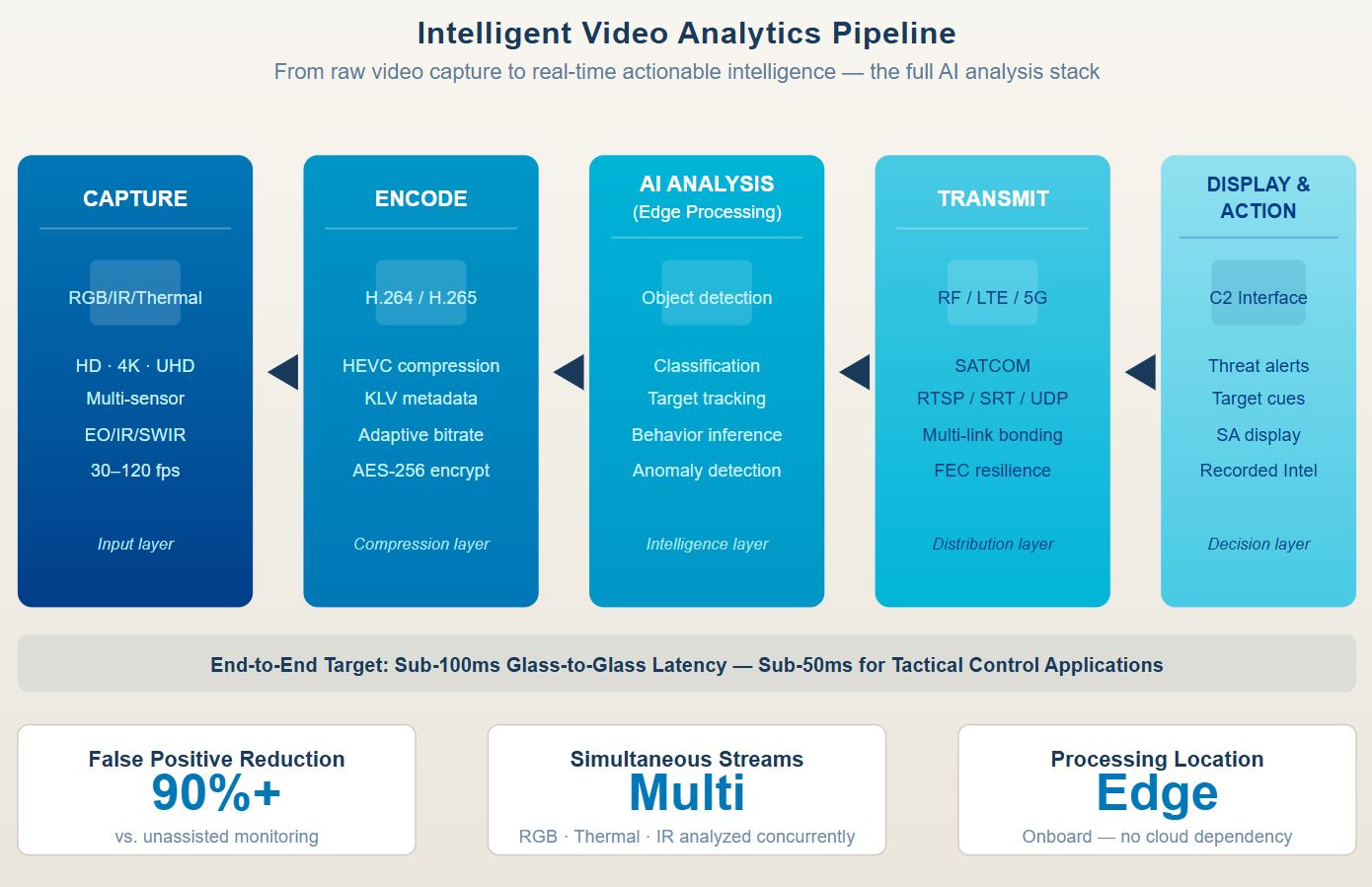

What Is Intelligent Video Analytics? A Defense and Security Guide for 2025-2026

Introduction

Raw video footage has never been the problem. The challenge – for defense forces, homeland security agencies, and commercial operators alike – is turning vast, continuous streams of video data into actionable intelligence, fast enough to matter. This is precisely what intelligent video analytics delivers: the ability to analyze video in real time, automatically detect objects and behaviors of interest, and surface relevant alerts without requiring a human operator to watch every frame. As AI capabilities have matured and edge computing has become viable on compact, ruggedized hardware, intelligent video analysis has transitioned from a niche research application to a core operational capability across defense, HLS, and critical infrastructure protection.

What Is Intelligent Video Analytics?

Intelligent video analytics (IVA) refers to the automated processing of video feeds using artificial intelligence and computer vision algorithms to extract structured, actionable information. Rather than passively recording and displaying footage, IVA systems actively analyze what the cameras see — identifying objects, classifying behaviors, tracking movement, and generating alerts when predefined conditions are met.

Modern intelligent video analysis encompasses several distinct analytical functions:

- Object detection: Identifying and locating vehicles, personnel, aircraft, or other objects within a video frame

- Object classification: Distinguishing between different categories — friendly forces vs. unknown contacts, light vehicles vs. armored vehicles, commercial aircraft vs. tactical UAVs

- Object tracking: Following a detected object across multiple frames and multiple camera feeds simultaneously

- Behavior recognition: Detecting patterns of movement or activity that indicate threat — unauthorized entry, loitering in restricted zones, convoy formation, or launch preparation

- Anomaly detection: Flagging deviations from learned baseline patterns without requiring explicit definition of every possible threat scenario

Why Intelligent Video Analytics Matters for Defense and Homeland Security

The operational case for intelligent video analysis in defense and HLS environments is straightforward but compelling. Modern surveillance architectures generate video data at volumes that exceed any human monitoring capacity. A single UAV conducting a 12-hour ISR mission generates hundreds of gigabytes of footage. A border surveillance system monitoring 100 kilometers of frontier operates continuously with no natural breaks. A force protection network around a forward operating base may run dozens of camera feeds simultaneously.
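The data volume claim can be checked with back-of-envelope arithmetic. The bitrates below are assumed values for illustration (actual figures depend on resolution, codec, and the number of sensors), and the function name is hypothetical.

```python
def footage_gigabytes(bitrate_mbps: float, hours: float) -> float:
    """Storage needed for a continuous stream, in decimal gigabytes."""
    bits = bitrate_mbps * 1_000_000 * hours * 3600
    return bits / 8 / 1_000_000_000

# Assumed aggregate bitrates for a 12-hour mission
for mbps in (8, 50):
    print(f"{mbps} Mbit/s for 12 h = {footage_gigabytes(mbps, 12):.1f} GB")
```

A single 8 Mbit/s stream comes to about 43 GB over 12 hours, while an aggregate of 50 Mbit/s (for example, several sensors or higher-quality encoding) reaches 270 GB, which is the range where no team of analysts can watch everything.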

Without automation, most of this data is never meaningfully analyzed. Operators become fatigued, attention narrows, and genuinely significant events can occur during the moments when no analyst is actively watching. Intelligent video analytics addresses this directly by maintaining continuous, consistent, tireless analysis — and by alerting human operators only when something requires their attention.

The benefits are measurable:

| Operational Benefit | Impact |
|---|---|
| Reduced operator cognitive load | Human analysts focus on decisions, not monitoring |
| Faster threat detection | Millisecond AI response vs. seconds or minutes for human detection |
| Continuous coverage | No fatigue, no shift changes, no lapses in attention |
| Multi-stream analysis | A single AI system monitors dozens of feeds simultaneously |
| Searchable intelligence | Post-mission analysis with indexed object and event records |

For an independent perspective on how intelligent video analytics integrates with broader tactical situational awareness frameworks, this analysis of modern situational awareness systems provides useful operational context.

The Technology Behind Intelligent Video Analysis

Understanding what makes intelligent video analytics effective requires understanding the technology stack that powers it — from sensor to alert.

Video Capture and Encoding

The analytical pipeline begins with video capture. Camera quality, resolution, spectral range (visible, infrared, thermal), and encoding standard all affect what the AI system can extract from the footage. H.265/HEVC encoding is preferred in bandwidth-constrained environments because it maintains high visual quality at lower bitrates — ensuring that the footage arriving at the AI analysis stage contains sufficient detail for accurate detection and classification.

AI Processing at the Edge

The most significant advancement in intelligent video analysis over the past several years has been the shift from cloud-dependent processing to edge-based AI inference. Rather than transmitting raw video to a centralized server for analysis, modern systems run AI models directly on the platform that captures the video — whether that is a UAV, a ground vehicle, a fixed camera, or a soldier-worn device. This eliminates the latency inherent in round-trip transmission, enables operation in bandwidth-limited or connectivity-denied environments, and reduces the risk of intelligence interception during transmission.

Object Detection and Classification Models

Convolutional neural networks (CNNs) and transformer-based vision models form the backbone of modern IVA systems. These models are trained on labeled datasets of the object categories and behaviors relevant to the deployment context — military vehicles, aircraft types, personnel in specific configurations, or activity patterns in specific terrain types. Well-trained models operating on appropriate hardware can achieve real-time inference at 30+ frames per second.

Alert Generation and Operator Interface

The output of the AI analysis pipeline is structured data — object identities, locations, confidence scores, and behavioral classifications — that feeds into operator interfaces designed to surface the highest-priority intelligence. Effective interfaces suppress false positives, provide context for alerts, and allow operators to drill into the underlying video for confirmation.
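One common way to suppress false positives is to require both a minimum confidence score and persistence across several consecutive frames before raising an alert. The sketch below illustrates that idea; the `Detection` fields, the `AlertFilter` class, and the thresholds are assumptions for the example rather than any vendor's interface.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Detection:
    track_id: int      # identity assigned by an upstream tracker
    label: str
    confidence: float

class AlertFilter:
    """Alert once per track, only after it persists with enough confidence."""

    def __init__(self, min_confidence=0.6, min_consecutive_frames=3):
        self.min_confidence = min_confidence
        self.min_consecutive_frames = min_consecutive_frames
        self._streak = defaultdict(int)
        self._alerted = set()

    def update(self, frame_detections):
        seen, alerts = set(), []
        for det in frame_detections:
            if det.confidence < self.min_confidence:
                continue  # too uncertain to count toward a streak
            seen.add(det.track_id)
            self._streak[det.track_id] += 1
            if (self._streak[det.track_id] >= self.min_consecutive_frames
                    and det.track_id not in self._alerted):
                self._alerted.add(det.track_id)
                alerts.append(det)
        # A track missing from this frame loses its streak
        for track_id in list(self._streak):
            if track_id not in seen:
                del self._streak[track_id]
        return alerts

demo = AlertFilter()
vehicle = Detection(1, "vehicle", 0.9)
for frame in ([vehicle, Detection(2, "person", 0.95)], [vehicle], [vehicle]):
    for alert in demo.update(frame):
        print(f"ALERT: {alert.label} (track {alert.track_id}, conf {alert.confidence})")
```

In this run the one-frame "person" flicker never produces an alert, while the vehicle that persists for three frames does. The trade-off is added delay: requiring N frames postpones the alert by roughly N frame periods.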

Maris-Tech’s Intelligent Video Analytics Approach

Maris-Tech has built its entire technology stack around the thesis that meaningful intelligence must be generated at the point of collection. The company’s AI edge video processing platforms perform the full intelligent video analysis pipeline onboard UAVs, unmanned ground vehicles, armored platforms, and soldier-carried systems — without dependency on cloud connectivity or ground station processing.

The Maris approach integrates every layer of the video analytics pipeline:

- Multi-sensor acquisition covering RGB, thermal, and infrared channels

- H.264/H.265 encoding optimized for bandwidth-constrained transmission

- Onboard AI inference using hardware accelerators (including the Hailo-8 chipset) for object detection, classification, and tracking

- Real-time alert generation feeding into command-and-control interfaces

- KLV metadata embedding for geospatial context in accordance with MISB standards
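For readers unfamiliar with KLV, the sketch below shows the general key-length-value framing with BER-encoded lengths. It is a generic illustration, not Maris-Tech's implementation: the 16-byte key is a placeholder rather than a registered key, and the payload is arbitrary bytes. Real MISB metadata uses keys, tags, and value encodings defined in the relevant standards.

```python
def ber_length(n: int) -> bytes:
    """Encode a length in BER: short form below 128, long form otherwise."""
    if n < 0:
        raise ValueError("length must be non-negative")
    if n < 128:
        return bytes([n])
    size = (n.bit_length() + 7) // 8
    return bytes([0x80 | size]) + n.to_bytes(size, "big")

def klv(key: bytes, value: bytes) -> bytes:
    """Frame a value as key + BER length + value."""
    return key + ber_length(len(value)) + value

# Placeholder 16-byte key for illustration only (not a registered key)
PLACEHOLDER_KEY = bytes(16)

packet = klv(PLACEHOLDER_KEY, b"example payload")
print(packet.hex())
```

Because each item carries its own length, a receiver can skip items it does not understand, which is what lets metadata travel alongside the video stream without breaking older decoders.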

This architecture is reflected in the company’s AI video analysis capabilities, which are deployed across defense, HLS, and commercial sectors globally. Field-proven with leading security organizations across Israel, Europe, North America, and Asia Pacific, Maris-Tech’s solutions are trusted in operational environments where the consequences of missed detections or false positives are measured in lives and mission outcomes.

Key Applications of Intelligent Video Analytics in 2025–2026

Intelligent video analysis is being applied across a rapidly expanding set of operational contexts:

Airborne ISR

UAVs equipped with IVA can autonomously detect and follow targets of interest across complex terrain — without requiring operators to actively track every movement. This dramatically extends the effective range of ISR missions and reduces the number of operators needed per platform.

Border and Perimeter Security

Fixed and mobile camera networks equipped with AI analysis can monitor extended frontiers 24/7, alerting security forces only when genuine incursions or anomalous behaviors are detected — filtering out false positives from wildlife, weather, or civilian movement.

Force Protection

Around forward operating bases or critical installations, intelligent video analytics provides persistent 360-degree awareness, detecting and classifying threats before they reach engagement range and cueing counter-measures or response forces.

Counter-UAS Operations

IVA systems are increasingly deployed specifically for the detection and classification of hostile UAVs — tracking swarm formations, identifying launch signatures, and supporting intercept targeting in real time.

Urban Operations

In complex urban environments, AI video analytics supports route reconnaissance, crowd monitoring, and facility security, identifying patterns of behavior that precede attacks or coordinated incursions.

According to Wikipedia’s overview of video analytics technology, the field has expanded significantly with the availability of affordable AI hardware and the maturation of computer vision models — making capabilities once reserved for the largest defense programs accessible to a much broader range of operators and applications.

Selecting an Intelligent Video Analytics System

For procurement teams and defense integrators evaluating IVA platforms, several technical criteria consistently separate operational-grade solutions from commercially-adequate alternatives:

- Detection accuracy at target ranges: What are the false positive and missed detection rates at operationally relevant distances?

- Multi-stream capacity: How many simultaneous video feeds can the system analyze without degrading detection performance?

- Latency from capture to alert: End-to-end pipeline latency of under 100ms is the operational standard for real-time tactical applications

- Edge processing independence: Can the system operate effectively without persistent connectivity to a ground station or cloud server?

- Environmental qualification: Is the hardware MIL-STD-rated for vibration, temperature extremes, dust, and moisture?

- Integration with C2 systems: Does the system output structured data compatible with standard command-and-control architectures?
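The latency criterion above can be evaluated by summing per-stage delays against the budget. The stage names and millisecond figures in this sketch are assumed values for illustration; a real evaluation would use measurements from the candidate system.

```python
# Assumed per-stage latencies in milliseconds (illustrative, not measured)
STAGE_LATENCY_MS = {
    "capture": 17,
    "encode": 15,
    "inference": 33,   # roughly one frame period at 30 frames per second
    "tracking": 5,
    "alert_dispatch": 10,
}

def total_latency_ms(stages):
    """End-to-end latency if the stages run strictly one after another."""
    return sum(stages.values())

def meets_budget(stages, budget_ms=100):
    return total_latency_ms(stages) <= budget_ms

total = total_latency_ms(STAGE_LATENCY_MS)
print(f"End-to-end: {total} ms, within 100 ms budget: {meets_budget(STAGE_LATENCY_MS)}")
```

With these assumed numbers the pipeline totals 80 ms. Adding a network round trip to a remote server could consume the remaining margin, which is one reason the edge processing criterion and the latency criterion are closely linked.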

As intelligent video analytics continues to mature, the gap between what AI-enabled systems can detect and what human operators can manually monitor will only grow wider. Organizations that build intelligent video analysis into their surveillance and ISR architecture now will hold a substantial operational advantage over those that treat it as a future capability.
