Cybersecurity

Maximizing Cyber Crime Investigation Opportunities

Cybercrime is steadily on the rise, and it seems that everyone knows someone whose computer or bank account has been compromised. Finding the perpetrators has often been likened to searching for a needle in a haystack, blindfolded.

The challenge for analysts and law enforcement is to conduct a thorough but efficient cybercrime investigation that concludes successfully and within a limited time window.

Given that cybercrime can vary from fraud to human trafficking and personal information theft to cyber terrorism, the pressure is directly on the shoulders of analysts to call it right, and quickly.

On top of that, cyber threat actors are now very tech-savvy. They know how to exploit a computer’s weaknesses and, at the same time, cover their digital footprints to avoid exposure.

Fortunately for analysts and researchers, many cybercrime investigation tools can outpace threat actors, track them down, identify them, extract quality data on their activity, and provide timely warning of threats.

The best web intelligence platform solutions are built with AI crime prediction tools that make sense of all the big data an analyst needs to monitor and often identify connections between threat actors, their circle, and any unusual online behavior.

Systems now come with overlaid intelligence cycles, allowing security professionals to bring together and make sense of disparate intelligence data points, creating a rich, colorful and holistic map of vulnerabilities, threats, and potential breaches.

These insights all help maintain effective protection of employees, executives, and information – whether at work, home, or anywhere along the way.

Continuous monitoring and indexing of the dark web provide seamless connectivity to thousands of dark web sources. A robust platform will also enable analysts to search people and keywords to evaluate and monitor forums and markets.

Extracting the intelligence can be tedious. Criminals and terrorists try to hide their criminal history, so finding the digital footprints that connect the dots and uncover new leads can be time-consuming.

But sophisticated AI algorithms are now being deployed to analyze collected data and provide deeper insights in real-time. Dark web monitoring also allows investigators to launch investigations with the smallest piece of digital forensics information, such as a threat actor’s name, location, IP address, or image.

Publicly available intelligence, such as that obtained through database searches that aggregate information and have a quick turnaround, can be cost-effective for some organizations. But open-source searches may not provide all the necessary data that a criminal investigation on the dark web can produce.

Software platforms, underpinned by methodologies that meet the requirements of law enforcement agencies across the globe, allow cases to be solved in minimum amounts of time with limited resources.

These AI-led search engines are capable of sifting through vast amounts of critical data across all layers of the internet, including open sources and the dark web, at a pace manual searching cannot match.

Using these advanced engines, officials can search for threat actors and, at a click, uncloak their identities and extract relevant metadata along with location history, web content history, social connections, and more.

While cybercrime is here to stay, sophisticated digital forensics is quickly turning the tide in favor of law enforcement, helping investigators unmask malicious activity and the threat actors behind it in short order.

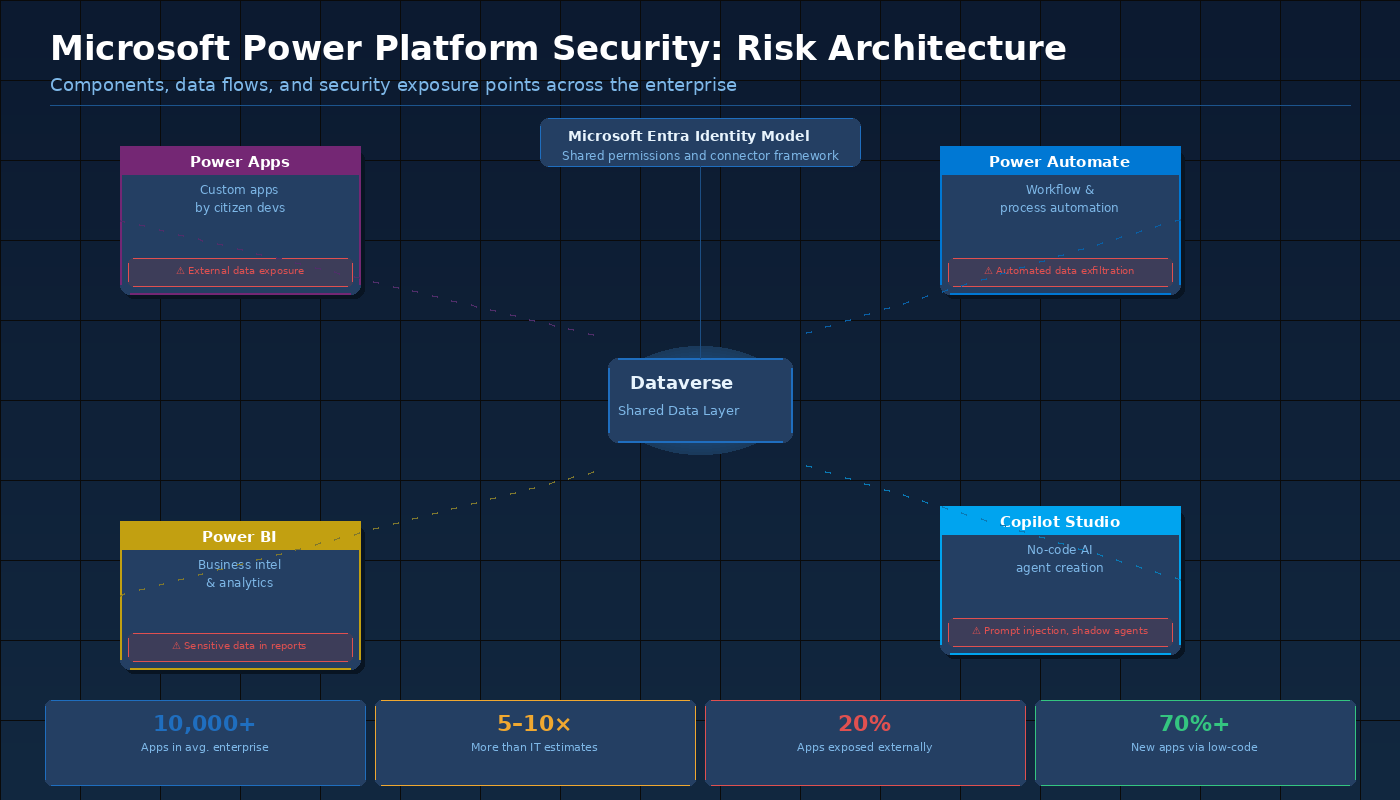

Microsoft Power Platform Security: The Risks CISOs Cannot Afford to Ignore

Microsoft Power Platform is now one of the most widely deployed technology ecosystems in the enterprise. Power Apps, Power Automate, Power BI, and Copilot Studio collectively enable millions of business users to build custom applications, automate complex workflows, analyze sensitive data, and deploy AI agents — all without writing a single line of code. The productivity gains are real and significant. The security implications are equally real — and far less often discussed.

Unlike traditional enterprise applications that pass through formal development and security review processes, Power Platform apps and automations are typically built by business users working at high speed, with limited security training and no mandatory AppSec review. The result is an ecosystem that grows faster than any security team can track, and faster than Microsoft’s native governance tools are designed to manage. This is the core challenge that purpose-built Microsoft Power Platform security platforms are designed to address.

Understanding the Power Platform Ecosystem

Power Platform is not a single product — it is an integrated ecosystem of tools that share a common data layer, a common connector framework, and a common identity model based on Microsoft Entra. Understanding each component’s security implications is essential for organizations seeking to govern the platform effectively:

| Component | Primary Function | Key Security Concerns |
| --- | --- | --- |
| Power Apps | Custom business application development | External data exposure, excess permissions |
| Power Automate | Workflow and process automation | Automated data exfiltration, unvetted triggers |
| Power BI | Business intelligence and data analytics | Sensitive data in reports, oversharing dashboards |
| Copilot Studio | No-code AI agent creation | Prompt injection, shadow agents, data leakage |
| Dataverse | Shared enterprise data platform | Misconfigured access, cross-app data exposure |

The shared architecture is both the platform’s strength and a key source of security risk. Because all components operate within the same data and identity model, a misconfiguration or vulnerability in one component can cascade across the others. An overly permissive Power Automate flow, for example, can move data from Dataverse into an external system in ways that affect every app that depends on that data.
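
To make the overly permissive flow scenario concrete, here is a minimal sketch of a connector check in the spirit of a Data Loss Prevention policy, where connectors are grouped as business, non-business, or blocked and a single flow should not mix business and non-business connectors. The connector names, the `POLICY` mapping, the `Flow` class, and the default group for unclassified connectors are all illustrative assumptions, not a real tenant configuration or a Microsoft API.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical policy: connector name -> data group (assumed values).
POLICY = {
    "dataverse": "business",
    "sharepoint": "business",
    "dropbox": "non_business",
    "twitter": "blocked",
}


@dataclass
class Flow:
    name: str
    connectors: list[str]


def violations(flow: Flow, policy: dict[str, str],
               default_group: str = "non_business") -> list[str]:
    """Return human-readable policy problems for one flow."""
    # Connectors missing from the policy fall into the assumed default group.
    groups = {c: policy.get(c, default_group) for c in flow.connectors}
    problems = [f"{c} is blocked" for c, g in groups.items() if g == "blocked"]
    used = set(groups.values()) - {"blocked"}
    # Mixing groups means business data could leave through a non-business connector.
    if {"business", "non_business"} <= used:
        problems.append("mixes business and non-business connectors")
    return problems


export_flow = Flow("nightly-export", ["dataverse", "dropbox"])
internal_flow = Flow("approval", ["dataverse", "sharepoint"])
print(violations(export_flow, POLICY))
print(violations(internal_flow, POLICY))
```

In this sketch the export flow is flagged because it pairs a Dataverse connection with an external file-sharing connector, while the purely internal flow passes.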

The Scale of Enterprise Power Platform Deployments

One of the most underappreciated aspects of Power Platform security is the sheer scale of typical enterprise deployments. Organizations that believe they have dozens of Power Platform apps typically have thousands. According to data from Nokod Security, the average large enterprise environment contains more than 10,000 Power Platform apps and automations — far exceeding what any team could review manually.

The scale problem is compounded by the platform’s accessibility: any business user can create a new app or flow. Because Power Platform security governance requires visibility across all of these assets simultaneously, manual approaches are operationally impossible at enterprise scale. Automation is not optional; it is a necessity.

Top Power Platform Security Risks

Based on real-world enterprise security assessments, the following risk patterns consistently emerge across Power Platform deployments:

- Data Leakage via Connectors: Power Automate and Power Apps connect to hundreds of third-party services via the Microsoft connector framework. Without proper Data Loss Prevention policies, sensitive data can flow to unauthorized destinations automatically, often without the app builder’s awareness.

- Excessive Sharing: Power Apps can be shared with individual users, security groups, or the entire organization. Apps shared tenant-wide expose their underlying data connections to all employees — a common misconfiguration that security teams rarely catch without automated scanning.

- Power BI Data Security: Power BI reports and dashboards often contain sensitive financial, operational, or customer data. Without row-level security and workspace governance, this data can be exposed to audiences far beyond what the report creator intended.

- Shadow Engineering: Business units build Power Platform solutions outside of IT visibility, creating a growing inventory of unmonitored apps that may expose sensitive data, violate compliance requirements, or become orphaned when their creators change roles.

- Injection Vulnerabilities: Power Apps connected to SQL databases or other data sources are vulnerable to injection attacks, particularly when input validation is handled by the app builder rather than by a trained developer.

- Supply Chain Risk: Connectors and custom APIs embedded in Power Platform solutions introduce third-party dependencies that carry their own security risks, including compromised endpoints and unauthorized data access.
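
The injection risk in the list above comes down to a general principle: user input must never be spliced into a query string. The sketch below illustrates that principle with Python's standard `sqlite3` module. It is a generic illustration, not Power Apps or Power Fx code, and the `customers` table and both function names are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO customers (name) VALUES (?)",
                 [("Alice",), ("Bob",)])


def find_customer_unsafe(conn, name):
    # Vulnerable: the input becomes part of the SQL text itself.
    query = f"SELECT id, name FROM customers WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_customer(conn, name):
    # Safer: the ? placeholder binds the input strictly as data.
    query = "SELECT id, name FROM customers WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


payload = "' OR '1'='1"
print(len(find_customer_unsafe(conn, payload)))  # every row leaks
print(len(find_customer(conn, payload)))         # no rows match
```

With the crafted input, the string-built query returns every row in the table, while the parameterized query returns nothing because no customer has that literal name.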

Gartner has predicted that low-code/no-code development will account for more than 70% of new enterprise application activity. As Power Platform adoption accelerates, these risks will grow proportionally unless organizations implement systematic governance.

Power BI Security: A Frequently Overlooked Attack Surface

Power BI occupies a unique position in the Power Platform security landscape. Unlike Power Apps and Power Automate, which are primarily operational tools, Power BI is designed specifically for distributing data broadly across the organization. Reports and dashboards are regularly shared with large internal audiences and, in many cases, embedded in external-facing portals.

This broad distribution model creates significant Power BI data security risks. Reports may contain embedded credentials or sensitive query logic. Workspaces may be shared without appropriate access controls. Data refresh schedules may pull from production systems without proper service account governance. And premium capacity environments may lack the monitoring required to detect unusual data access patterns.

Managing these risks requires the same combination of inventory, policy enforcement, and automated monitoring that governs the broader Power Platform. For organizations seeking to address data leakage prevention across their entire Power Platform environment, a unified approach that covers all components — including Power BI — is essential.

How Nokod Addresses Power Platform Security

Nokod Security was built specifically for the low-code/no-code security challenge. Its platform connects to Power Platform environments and, within minutes, delivers a complete inventory of every app, flow, agent, and data connection — including assets that IT has never seen. From that inventory, Nokod automatically surfaces the risks that matter: excessive permissions, unauthorized sharing, connector policy violations, injection vulnerabilities, and data exposure paths.

For Power BI specifically, Nokod scans workspace configurations, sharing settings, and data access patterns to identify dashboards and reports that expose sensitive data to unintended audiences. One-click remediation options allow security teams to address identified issues at scale without requiring app-by-app manual review.

Fortune 500 companies across insurance, healthcare, and financial services have deployed Nokod to bring security rigor to their Power Platform environments. The typical finding: the actual number of apps and automations is between five and ten times larger than what IT believed existed — and a significant proportion carry high-severity security findings that require immediate remediation.

For a detailed analysis of Power Platform security risks and remediation strategies, see this comprehensive guide at techpr.online.

For background on the broader low-code security landscape, the Wikipedia article on Low-code development platform provides useful context.

Conclusion

Microsoft Power Platform has become indispensable to enterprise operations — and one of its most significant security blind spots. The combination of rapid citizen development, complex multi-component architecture, and organizational scale creates risks that manual governance processes cannot address. Nokod Security provides the automated visibility, risk detection, and remediation capabilities that Power Platform environments require — enabling organizations to accelerate digital transformation on Power Platform with confidence that the security team has the oversight the enterprise demands.

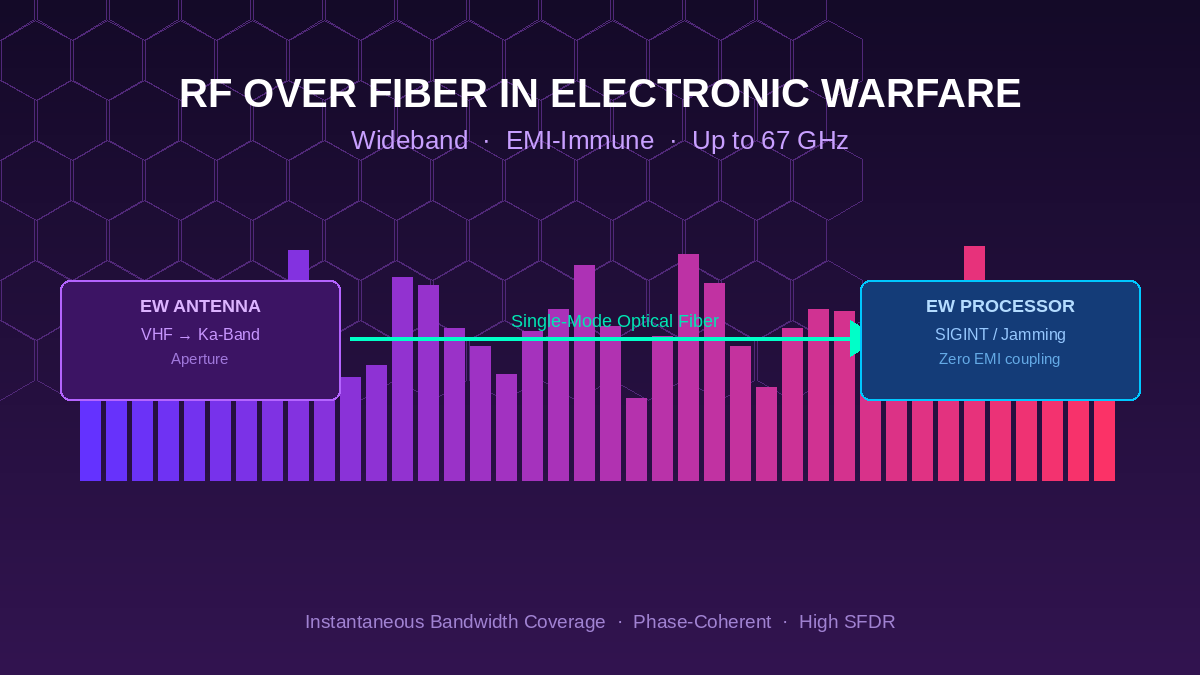

RF over Fiber in Electronic Warfare: How Optical Links Solve the EW Signal Distribution Challenge

Introduction

Electronic warfare systems operate at the intersection of high frequency, wide bandwidth, and hostile electromagnetic environments. The signals of interest span from VHF tactical communications bands through X-band and Ka-band radar frequencies, often demanding instantaneous coverage across tens of gigahertz. Connecting antennas, sensors, and processing hardware across the physical distances of a ship, aircraft, or ground vehicle while preserving signal fidelity at these frequencies has historically been one of the most demanding challenges in platform integration. RF over fiber technology for EW and radar applications has emerged as the definitive solution for this distribution problem.

Why Coaxial Cables Fail in Modern EW Environments

Coaxial cable has served as the backbone of RF signal distribution for decades. However, its limitations become severe when pushed to the demands of modern electronic warfare architectures. At frequencies above 6 GHz, high-grade coaxial cable loses approximately 100 dB or more per 100 meters, making long antenna-to-receiver runs impractical without multiple inline amplifiers. Each amplifier adds noise, non-linearity, and a potential point of failure.
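
A short calculation shows why long coaxial runs need inline amplification. The sketch below takes the loss figure quoted above (roughly 100 dB per 100 meters) at face value; the 50 m run length and the 30 dB per-amplifier gain are assumed values chosen only for illustration.

```python
import math


def run_loss_db(length_m, loss_db_per_100m):
    """Total cable attenuation in dB for a run of the given length."""
    return loss_db_per_100m * length_m / 100.0


def amplifiers_needed(loss_db, gain_db_per_amp):
    """Smallest number of inline amplifiers whose combined gain covers the loss."""
    return math.ceil(loss_db / gain_db_per_amp)


loss = run_loss_db(length_m=50, loss_db_per_100m=100)   # assumed 50 m run
remaining_fraction = 10 ** (-loss / 10)                 # power ratio at the far end
print(f"loss: {loss:.0f} dB, remaining power fraction: {remaining_fraction:.0e}")
print("amplifiers at 30 dB each:", amplifiers_needed(loss, gain_db_per_amp=30))
```

Under these assumptions a 50 m run loses 50 dB, leaving about one hundred-thousandth of the input power, and needs two amplifiers just to break even.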

Beyond attenuation, coaxial systems are intrinsically susceptible to electromagnetic interference. In an EW environment, the platform itself may be the source of powerful jamming signals, radar emissions, or electronic attack pulses. These signals couple into long coaxial runs, degrading the sensitivity and dynamic range of receive chains. Heavy copper shielding adds weight, and ground loops between equipment racks create noise floors that can obscure low-level signals of interest.

The Optical Advantage for Wideband Signal Distribution

RF over fiber (RFoF) links convert the RF signal to an optical carrier at the source (typically at the antenna aperture), transmit the modulated light through a single-mode optical fiber, and convert it back to RF at the processing point. The optical fiber itself is immune to electromagnetic interference, introduces no ground loops, weighs a fraction of comparable coaxial solutions, and supports bandwidths from DC through millimeter-wave frequencies across a single physical medium.

The frequency coverage advantage is particularly significant for EW applications. While conventional RFoF suppliers typically support frequencies to 6 GHz, high-frequency RF over fiber systems designed for EW and radar cover frequencies from below 1 GHz up to 67 GHz and beyond. This enables a single fiber link to simultaneously carry L-band GPS, S-band communications, C-band fire control radar, X-band surveillance radar, and Ka-band sensor signals, dramatically reducing the fiber count and connector complexity of multi-band EW suites.

Key Performance Parameters for EW RFoF Links

Electronic warfare applications impose specific performance requirements that go beyond what is adequate for commercial telecommunications use cases. The following parameters are particularly critical:

- Spurious-Free Dynamic Range (SFDR): EW systems must detect low-level signals in the presence of powerful nearby emitters. A high SFDR allows the analog fiber link to preserve the full dynamic range available at the antenna aperture, deferring digitization to the processing subsystem where dedicated ADC architectures can handle the burden.

- Noise Figure: The RFoF link adds noise to the received signal chain. In receive-only applications, a low-noise preamplifier at the antenna end can recover most of this penalty and keep the system noise figure consistent with coaxial alternatives.

- Phase Coherence: Coherent radar and electronic intelligence (ELINT) systems require multiple antenna channels to maintain precise phase relationships. Phase-matched RFoF link pairs ensure that angle-of-arrival measurements and coherent beamforming calculations remain accurate.

- Instantaneous Bandwidth: EW receivers are often required to process signals anywhere across a multi-gigahertz tuning range without prior knowledge of the signal’s frequency. A wideband fiber link that supports the full instantaneous bandwidth of the receiver avoids the need for preselector filtering that could block signals of interest.
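
The noise figure point can be checked with the standard Friis formula for cascaded stages, in which each later stage's noise contribution is divided by the gain ahead of it. The following sketch uses assumed values (a 30 dB link noise figure, and a preamplifier with a 2 dB noise figure and 30 dB gain) that are not taken from any specific product.

```python
import math


def db_to_lin(db):
    return 10 ** (db / 10)


def lin_to_db(x):
    return 10 * math.log10(x)


def cascaded_nf_db(stages):
    """Friis formula. stages: list of (noise_figure_db, gain_db) in signal order."""
    total_f = 0.0
    gain_so_far = 1.0
    for i, (nf_db, gain_db) in enumerate(stages):
        f = db_to_lin(nf_db)
        # The first stage counts in full; later stages are divided by prior gain.
        total_f += f if i == 0 else (f - 1) / gain_so_far
        gain_so_far *= db_to_lin(gain_db)
    return lin_to_db(total_f)


link_only = cascaded_nf_db([(30, 0)])               # assumed RFoF link alone
with_preamp = cascaded_nf_db([(2, 30), (30, 0)])    # assumed preamp ahead of the link
print(f"link only: {link_only:.1f} dB, with preamp: {with_preamp:.1f} dB")
```

With these numbers the preamplifier brings the chain from 30 dB down to roughly 4 dB, which is the sense in which it recovers most of the penalty.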

Platform Integration: Ship, Aircraft, and Ground Vehicle Applications

The physical integration benefits of optical fiber are especially pronounced on military platforms where space and weight are at a premium. A single optical fiber with an outer diameter of 2-3 mm can replace a bundle of coaxial cables that might weigh several kilograms per meter. On large surface combatants with antenna apertures located at mast height, this translates to hundreds of kilograms of weight reduction per fiber run replaced.

On aircraft and unmanned aerial vehicles, the weight savings directly translate to increased payload, endurance, or fuel efficiency. The flexibility of optical fiber also simplifies routing through tight conduit paths and around structural members where rigid coaxial assemblies would require complex custom fabrication. Fiber runs can be field-terminated and replaced far more quickly than precision coaxial assemblies, supporting faster maintenance turnaround times.

Optical Delay Lines in EW Signal Processing

Beyond signal distribution, optical delay lines play a direct role in EW signal processing architectures. Photonic time-stretch analog-to-digital converters use chirped fiber delay elements to effectively slow down high-bandwidth RF signals before digitization. Radar warning receivers and jamming systems use precise delay lines to generate coherent responses to intercepted signals. Optical delay line solutions for EW applications provide the stable, phase-matched delays that these advanced processing architectures require, with frequency coverage that extends through Ka-band and V-band signals beyond the reach of conventional delay line technology.
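
The delay a fiber spool produces follows directly from its length and the group index of the glass. The sketch below assumes a group index of about 1.468, a typical value for standard single-mode fiber near 1550 nm; actual fibers and wavelengths will differ slightly.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def fiber_delay_s(length_m, group_index=1.468):
    """Propagation delay through a fiber of the given length."""
    return group_index * length_m / C


def fiber_length_m(delay_s, group_index=1.468):
    """Fiber length needed to produce the requested delay."""
    return delay_s * C / group_index


print(f"1 km of fiber: {fiber_delay_s(1000) * 1e6:.2f} microseconds")
print(f"10 microsecond delay: {fiber_length_m(10e-6):.0f} m of fiber")
```

Under this assumption, each kilometer of fiber contributes a little under 5 microseconds of delay, so a 10 microsecond delay line needs about 2 km of fiber.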

Conclusion

Electronic warfare is one of the most demanding applications in the RF domain, and signal distribution quality directly determines how well a system can detect, classify, and counter threats. RF over fiber technology addresses the fundamental limitations of coaxial distribution by offering immunity to interference, dramatic weight savings, and frequency coverage that extends to millimeter-wave bands. As EW systems continue to expand their frequency coverage and require tighter integration of multiple sensor apertures, optical signal distribution will become increasingly essential to achieving the performance goals that modern defense platforms demand.

For further context on the evolving frequency landscape in defense electronics, Microwave Journal provides authoritative coverage of EW system developments and RF photonics technology.

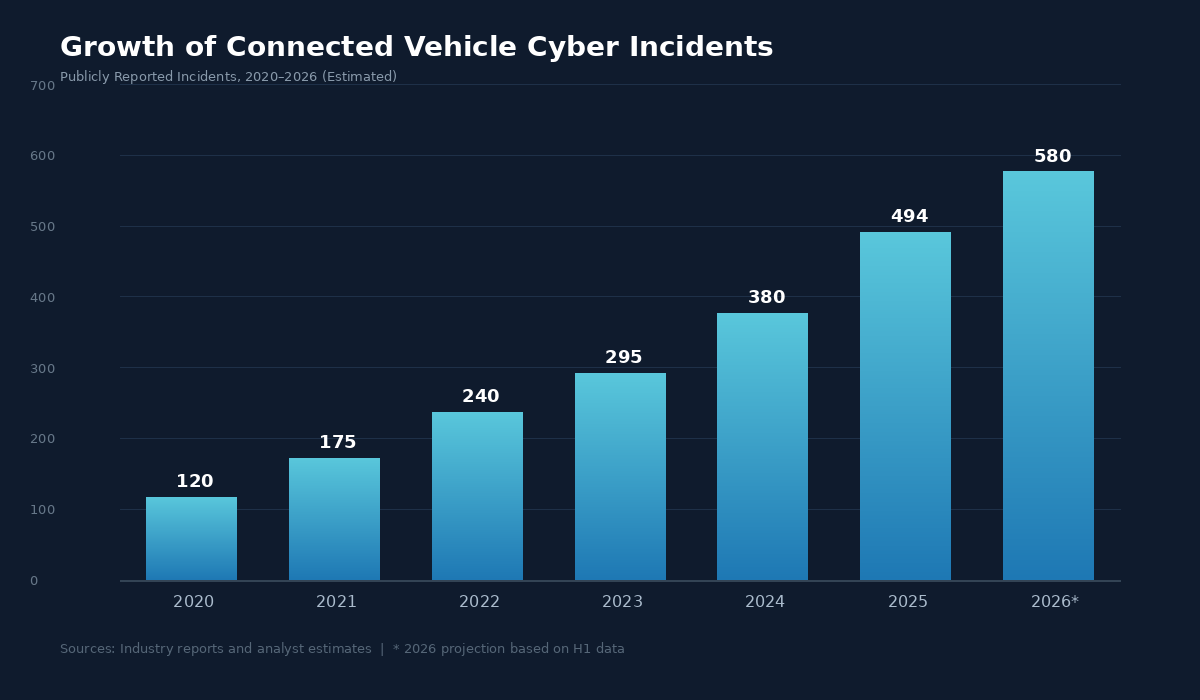

Connected Car Security in 2026: Top Threats and How Automakers Are Fighting Back

The modern vehicle is no longer simply a machine that gets you from point A to point B. Today’s cars are rolling data centers — equipped with dozens of electronic control units, over-the-air update capabilities, and constant cloud connectivity. While this transformation has delivered extraordinary convenience and safety features, it has also created a vast new attack surface for cybercriminals. As we move deeper into 2026, connected car security has become one of the most critical priorities for automakers, fleet operators, and regulators worldwide.

A growing body of research confirms the scale of the problem. Industry analysts documented nearly 500 publicly reported automotive cybersecurity incidents across the mobility ecosystem in 2025 alone, a sharp year-over-year increase that shows no signs of slowing. Remote attacks — carried out over cellular, Wi-Fi, and Bluetooth interfaces — now account for the vast majority of these incidents, underscoring how the connected nature of modern vehicles has fundamentally changed the threat landscape.

Why Connected Car Security Is More Urgent Than Ever

Several converging trends are amplifying cybersecurity risk in the automotive sector. First, the number of connected vehicles on the road continues to climb rapidly. Estimates suggest there are now well over 400 million connected cars in active use globally, each one a potential target. Second, the rise of software-defined vehicles (SDVs) means that an increasing share of a car’s functionality — from braking to infotainment — depends on software that can be updated, modified, or compromised remotely.

Third, the financial incentives for attackers have grown. Keyless car theft, which exploits vulnerabilities in CAN bus communication protocols and relay attack vectors, has become a widespread problem in markets across Europe, North America, and Asia. According to law enforcement data, vehicles equipped with keyless entry systems are disproportionately targeted, with some models experiencing theft rates many times higher than their conventional counterparts.

The regulatory environment is also tightening. The UNECE WP.29 regulations — specifically UNR 155, which mandates cybersecurity management systems for all new vehicle types — have raised the compliance bar significantly. OEMs that fail to meet these standards risk being unable to sell vehicles in major markets.

The Most Common Connected Car Attack Vectors

Understanding where the vulnerabilities lie is the first step toward effective protection. The primary attack vectors targeting connected vehicles today include:

| Attack Vector | Description | Risk Level |
| --- | --- | --- |
| CAN Bus Injection | Attackers send malicious commands through the vehicle’s internal Controller Area Network | Critical |
| Relay/Keyless Entry Attacks | Signal amplification tricks used to unlock and start vehicles without the physical key | High |
| Telematics & OTA Exploits | Compromising cloud-connected telematics units or intercepting over-the-air software updates | High |
| Infotainment Breaches | Exploiting vulnerabilities in entertainment systems to pivot into safety-critical networks | Medium–High |
| V2X Communication Spoofing | Injecting false data into vehicle-to-everything communication channels | Emerging |

Each of these vectors requires a different defensive strategy, which is why the industry has increasingly moved toward unified, platform-level security approaches rather than piecemeal point solutions.

Automotive Cybersecurity Best Practices Driving the Industry Forward

Leading OEMs and Tier 1 suppliers have begun adopting a set of cybersecurity best practices that are rapidly becoming the standard for the industry. These include:

Security-by-design architectures. Rather than bolting on security after the fact, forward-thinking manufacturers are embedding AI-powered cybersecurity directly into the vehicle’s electronic architecture from the earliest design stages. This “shift left” approach catches vulnerabilities before they reach production.

Intrusion detection and prevention systems (IDPS). In-vehicle IDPS solutions monitor network traffic across CAN, Ethernet, and other protocols in real time, detecting and blocking anomalous behavior before it can escalate. Advanced solutions filter noise at the edge, reducing the volume of data that needs to be transmitted to cloud-based security operations centers.
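
One simple way to picture anomaly detection on a CAN bus is a timing check: many CAN messages are sent periodically, so an injected frame tends to arrive sooner than the expected interval for its identifier. The sketch below is a deliberately minimal illustration of that idea. The `TimingMonitor` class, the identifier `0x1A0`, its 10 ms period, and the tolerance are all hypothetical, and production IDPS products use far richer features than this.

```python
from __future__ import annotations


class TimingMonitor:
    """Flags frames that arrive much sooner than their expected period."""

    def __init__(self, expected_period_s: dict[int, float], tolerance: float = 0.5):
        self.expected = expected_period_s      # CAN ID -> nominal period (assumed known)
        self.tolerance = tolerance             # fraction of the period treated as too short
        self.last_seen: dict[int, float] = {}

    def observe(self, can_id: int, timestamp_s: float) -> str | None:
        if can_id not in self.expected:
            return "unknown_id"
        previous = self.last_seen.get(can_id)
        self.last_seen[can_id] = timestamp_s
        if previous is None:
            return None                        # first sighting, nothing to compare against
        gap = timestamp_s - previous
        if gap < self.expected[can_id] * self.tolerance:
            return "too_frequent"
        return None


monitor = TimingMonitor({0x1A0: 0.010})        # hypothetical 10 ms message
for ts in (0.000, 0.010, 0.011, 0.020):
    print(ts, monitor.observe(0x1A0, ts))
```

Here the frame at 0.011 s is flagged because it follows the previous one by only 1 ms. Filtering this kind of event in the vehicle, rather than streaming every frame to the cloud, is the edge-filtering idea described above.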

Vehicle Security Operations Centers (VSOCs). Cloud-based VSOCs aggregate data from millions of vehicles to detect fleet-wide attack patterns, correlate threat intelligence, and coordinate incident response. The combination of edge detection and cloud analytics creates a defense-in-depth model that mirrors best practices from enterprise IT security.

Automated DevSecOps. Security testing — including fuzz testing and software bill of materials (SBOM) vulnerability scanning — is being integrated directly into CI/CD pipelines, ensuring that every software release is vetted before deployment.
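
As a rough illustration of SBOM vulnerability scanning in a pipeline, the sketch below matches a list of components against a list of advisories and reports anything older than the fixed version. The component names, versions, and advisory identifiers are invented, the data layout is an assumption rather than a real SBOM format, and the version comparison only handles simple dotted numeric versions.

```python
from __future__ import annotations


def parse_version(version: str) -> tuple[int, ...]:
    """Turn '1.4.2' into (1, 4, 2); assumes dotted numeric versions only."""
    return tuple(int(part) for part in version.split("."))


def scan(sbom: list[dict], advisories: list[dict]) -> list[tuple[str, str, str]]:
    """Return (component, version, advisory id) for each vulnerable component."""
    findings = []
    for comp in sbom:
        for adv in advisories:
            same_name = comp["name"] == adv["name"]
            if same_name and parse_version(comp["version"]) < parse_version(adv["fixed_in"]):
                findings.append((comp["name"], comp["version"], adv["id"]))
    return findings


# Hypothetical data for illustration only.
sbom = [
    {"name": "tls-lib", "version": "1.4.2"},
    {"name": "can-stack", "version": "3.0.0"},
]
advisories = [
    {"id": "ADV-0001", "name": "tls-lib", "fixed_in": "1.5.0"},
    {"id": "ADV-0002", "name": "can-stack", "fixed_in": "2.9.1"},
]

findings = scan(sbom, advisories)
print(findings)
```

A pipeline step built on this idea would typically fail the build when `findings` is non-empty, so a release with a known-vulnerable component never reaches deployment.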

Regulatory compliance frameworks. Aligning with ISO/SAE 21434 and UNR 155 provides a structured approach to managing cybersecurity risk across the entire vehicle lifecycle, from concept through decommissioning.

How the Industry’s Leaders Are Responding

Among the companies at the forefront of connected car security, PlaxidityX (formerly Argus Cyber Security) stands out for its unified Vehicle Detection and Response (VDR) platform. With over 70 million vehicles protected and more than 80 production projects globally, PlaxidityX offers an architecture-agnostic solution that secures the vehicle from the edge to the cloud. Their approach — combining embedded in-vehicle agents with cloud-based analytics — directly addresses the challenge of vendor sprawl that has plagued many OEM security programs.

The company’s active keyless theft prevention technology is particularly notable: an embedded agent neutralizes CAN injection and relay attacks in milliseconds at the edge, before the engine starts. This capability can be offered as a premium subscription service, transforming cybersecurity from a pure cost center into a revenue-generating feature — a shift that is reshaping how OEMs think about the business of vehicle security.

What Comes Next for Connected Vehicle Protection

Looking ahead, the convergence of AI and automotive cybersecurity promises to accelerate both offensive and defensive capabilities. Machine learning models will become more adept at identifying zero-day threats in real time, while attackers will similarly leverage AI to automate vulnerability discovery. The arms race will favor those manufacturers who invest early in comprehensive, continuously updated security platforms.

For fleet operators, the stakes are equally high. A single compromised vehicle can serve as a gateway to an entire fleet’s data and operational systems. Solutions that combine intelligent edge filtering with centralized SOC monitoring will be essential for managing risk at scale.

The era of the connected car has delivered remarkable innovation. Ensuring that innovation remains safe and secure will require sustained investment, industry collaboration, and a commitment to treating cybersecurity not as an afterthought, but as a foundational element of every vehicle that rolls off the production line.

For further reading on how the UNECE WP.29 regulation is reshaping automotive compliance requirements, consult the United Nations Economic Commission for Europe’s public documentation.
