Business Solutions

Exploring TOPS in AI and Its Impact on Industrial Automation

Artificial intelligence is changing how industrial automation works, and the hardware that runs it is usually described with one headline number: TOPS, or Tera Operations Per Second. As businesses look for faster and more efficient automation, TOPS has become a common shorthand for how much AI computation a processor can deliver. In this blog post, we’ll look at what TOPS means, how it affects productivity and innovation across industries, and how it supports machines that not only assist operators but also adapt to changing environments.

Artificial intelligence (AI) has become a cornerstone of industrial automation, improving efficiency, accuracy, and productivity. One of the key metrics for evaluating AI hardware is TOPS (Tera Operations Per Second). Understanding what TOPS is and how it influences AI for industrial automation is crucial for getting the most from these technologies. This article covers the significance of TOPS, its impact on industrial automation, and the trends shaping both.

Understanding TOPS in AI

TOPS, or Tera Operations Per Second, is a metric used to measure the processing power of AI systems. It indicates how many trillions of operations an AI processor can perform in one second. Vendors usually quote it as a peak figure, so sustained throughput on a real workload is typically lower. High TOPS values matter for the complex computations and large datasets common in AI applications. In industrial automation, where real-time data processing and decision-making are critical, sufficient TOPS headroom helps AI systems keep up with incoming data.

TOPS is particularly important for tasks that require rapid processing of large amounts of data, such as image recognition, predictive maintenance, and real-time monitoring. All else being equal, a higher TOPS rating means the system can handle more of this work per second, which improves performance in industrial settings.
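To make the definition concrete, here is a minimal sketch of how a peak TOPS figure is commonly estimated from a processor's multiply-accumulate (MAC) units and clock speed. The function name and the example chip (4,096 MAC units at 1 GHz) are hypothetical, and counting one MAC as two operations is a widespread convention rather than a universal rule.

```python
def peak_tops(mac_units: int, clock_hz: float, ops_per_mac: int = 2) -> float:
    """Estimate peak tera-operations per second.

    Assumes every MAC unit completes one multiply-accumulate per clock
    cycle, and that each MAC counts as `ops_per_mac` operations.
    """
    ops_per_second = mac_units * ops_per_mac * clock_hz
    return ops_per_second / 1e12


# Hypothetical accelerator: 4,096 MAC units running at 1 GHz.
print(f"{peak_tops(4096, 1.0e9):.3f} TOPS")
```

This is a theoretical ceiling. Memory bandwidth, numeric precision, and how well a model maps onto the hardware all affect the throughput actually achieved.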

The Role of AI in Industrial Automation

AI applications in industrial automation are revolutionizing how industries operate. From predictive maintenance to quality control, AI enables more efficient and accurate processes. By integrating AI, industries can automate routine tasks, reduce human error, and optimize resource allocation. AI-driven systems can analyze data in real-time, predict equipment failures, and provide actionable insights, which enhances operational efficiency and reduces downtime.

Moreover, AI enhances the flexibility of industrial automation systems, allowing them to adapt to changing conditions and demands. This adaptability is crucial for industries that require high levels of customization and precision, such as automotive manufacturing and pharmaceuticals. By leveraging AI, these industries can achieve higher productivity and maintain competitive advantages in their respective markets.

How TOPS Enhances AI Performance

TOPS is a critical measure of AI performance because it directly impacts the processing speed and efficiency of AI algorithms. High TOPS values enable AI systems to perform complex calculations quickly, which is essential for real-time applications. In industrial automation, this means that AI can process sensor data, control machinery, and make decisions without delays, leading to smoother and more reliable operations.

For instance, in a production line, AI systems with high TOPS can detect defects in products in real-time, allowing for immediate corrective actions. This rapid response helps in maintaining product quality and reducing waste. Additionally, high TOPS capabilities support advanced machine learning models that can predict maintenance needs, optimize production schedules, and improve overall system performance.
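A rough way to check whether a processor can keep up with a production line is to compare the estimated time per inference against the time available per camera frame. The sketch below assumes a hypothetical model needing 8 billion operations per image, an 8 TOPS processor, and a 30% utilization factor. All three numbers are illustrative and not measurements from any real system.

```python
def inference_latency_ms(ops_per_inference: float, peak_tops: float,
                         utilization: float = 0.3) -> float:
    """Estimate the latency of one inference in milliseconds.

    `utilization` is the assumed fraction of peak TOPS that the
    workload actually sustains.
    """
    effective_ops_per_second = peak_tops * 1e12 * utilization
    return ops_per_inference / effective_ops_per_second * 1e3


def frame_budget_ms(frames_per_second: float) -> float:
    """Time available to process each frame, in milliseconds."""
    return 1e3 / frames_per_second


latency = inference_latency_ms(ops_per_inference=8e9, peak_tops=8.0)
budget = frame_budget_ms(30)
print(f"estimated latency: {latency:.2f} ms, frame budget: {budget:.2f} ms")
print("keeps up with the line" if latency <= budget else "too slow for this frame rate")
```

The estimate ignores image capture, pre-processing, and data transfer time, so it is best used as a first filter when comparing hardware options.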

Key AI Technologies Utilizing High TOPS

Several AI technologies benefit significantly from high TOPS, particularly those used in industrial automation. Machine learning and deep learning algorithms, which require extensive computational power, perform better with high-TOPS processors. These algorithms are used for tasks such as predictive maintenance, quality control, and robotics.

For example, convolutional neural networks (CNNs) used in image recognition applications require high TOPS to process images quickly and accurately. In industrial automation, CNNs can be used to inspect products on a production line, identifying defects or deviations from the norm. Similarly, recurrent neural networks (RNNs) used in predictive analytics rely on high TOPS to analyze time-series data and forecast equipment failures.
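To see why CNNs are so demanding, it helps to count the multiply-accumulate operations in a single convolutional layer. The following sketch uses the standard count for a dense 2D convolution. The layer dimensions are a hypothetical example, and bias terms and activation functions are left out.

```python
def conv2d_macs(out_height: int, out_width: int, in_channels: int,
                out_channels: int, kernel_size: int) -> int:
    """Multiply-accumulates for one dense 2D convolution layer.

    Each output value needs kernel_size * kernel_size * in_channels MACs.
    """
    macs_per_output = kernel_size * kernel_size * in_channels
    return out_height * out_width * out_channels * macs_per_output


# Hypothetical layer: 3x3 kernel, 64 -> 64 channels, 56x56 output map.
macs = conv2d_macs(56, 56, in_channels=64, out_channels=64, kernel_size=3)
ops = 2 * macs  # counting each MAC as a multiply plus an add
print(f"{macs:,} MACs, about {ops / 1e9:.2f} billion operations")
```

A full inspection network stacks many such layers, which is how per-image workloads reach billions of operations.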

Challenges of Implementing High-TOPS AI in Industrial Automation

Implementing high-TOPS AI in industrial automation comes with its challenges. Technical challenges include the need for robust infrastructure to support high computational power and ensuring compatibility with existing systems. Additionally, the cost of high-TOPS AI processors can be a barrier for some industries.

Logistical challenges involve integrating AI into existing workflows without disrupting operations. This requires careful planning and a clear understanding of the specific needs of the industry. Training personnel to operate and maintain high-TOPS AI systems is also crucial for successful implementation.

Solutions to these challenges include investing in scalable infrastructure, adopting open standards for compatibility, and providing comprehensive training programs for employees. Collaboration with AI vendors and experts can also help industries overcome these challenges and fully leverage the benefits of high-TOPS AI.

Future Trends: TOPS and AI in Industrial Automation

The future of TOPS and AI in industrial automation is promising, with several emerging trends poised to enhance their impact. One such trend is the development of AI processors specifically designed for industrial applications. These processors will offer even higher TOPS, optimized for the unique demands of industrial environments.

Another trend is the integration of AI with edge computing, which brings processing power closer to the data source. This reduces latency and enhances real-time decision-making capabilities. Additionally, advancements in machine learning algorithms will enable more efficient use of TOPS, making AI systems even more powerful and effective.

Predictions for the future include widespread adoption of AI-driven autonomous systems in industrial automation. These systems will rely on high-TOPS processors to perform complex tasks with minimal human intervention. The continuous improvement of AI and TOPS technology will drive innovation and growth in the industrial sector, leading to smarter, more efficient operations.

Comparing TOPS with Other AI Performance Metrics

While TOPS is a crucial metric for evaluating AI performance, other metrics such as FLOPS (Floating Point Operations Per Second) and MACs (multiply-accumulate operations) are also used. FLOPS counts floating-point operations per second, whereas TOPS figures are usually quoted for low-precision integer arithmetic such as INT8. A MAC is a single multiply followed by an add, conventionally counted as two operations, and MAC counts describe how much work a given model or layer requires.

Each metric has its advantages and limitations. TOPS is particularly useful for applications requiring high-speed data processing, such as real-time monitoring and control. FLOPS is often used in scientific computing and research, where floating-point precision is paramount. MACs are valuable for estimating the computational cost of specific AI models and algorithms.

Comparing these metrics helps industries choose the right AI processors for their specific needs. High-TOPS processors are ideal for industrial automation applications that require rapid data processing and real-time decision-making. By understanding the strengths and limitations of each metric, industries can make informed decisions about AI adoption and implementation.

Leveraging TOPS for Real-Time Decision Making

Real-time data processing is crucial for industrial automation, where timely and accurate decisions can significantly impact efficiency and safety. High-TOPS AI systems excel in real-time applications, enabling faster and more precise decision-making.

For example, in a chemical plant, high-TOPS AI can monitor and control production processes in real-time, ensuring optimal conditions and preventing hazardous situations. The AI system can process data from sensors, detect anomalies, and adjust parameters immediately, enhancing safety and productivity.
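The monitoring loop described above can be illustrated with a very simple anomaly check: flag any sensor reading that deviates strongly from the recent average. This is a minimal sketch of one common statistical approach (a rolling z-score), not the method used by any particular plant or product. The function name, window size, threshold, and temperature values are all assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, stdev


def find_anomalies(readings, window=5, threshold=3.0):
    """Return the indices of readings far outside the recent baseline."""
    history = deque(maxlen=window)
    flagged = []
    for index, value in enumerate(readings):
        if len(history) == window:
            baseline, spread = mean(history), stdev(history)
            if spread > 0 and abs(value - baseline) > threshold * spread:
                flagged.append(index)
                continue  # keep outliers out of the baseline
        history.append(value)
    return flagged


# Hypothetical reactor temperatures with one sudden spike.
temperatures = [70.1, 70.3, 69.9, 70.2, 70.0, 70.1, 85.0, 70.2]
print(find_anomalies(temperatures))
```

A production system would typically use learned models over many sensors at once, which is where the processor's throughput becomes the limiting factor.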

By leveraging high-TOPS AI, industries can achieve better outcomes in real-time applications, improving overall operational performance. The ability to process data quickly and make informed decisions in real-time is a significant advantage of high-TOPS AI systems.

Ethical Considerations and Security in High-TOPS AI Systems

As with any advanced technology, deploying high-TOPS AI systems raises ethical and security concerns. Ensuring the ethical use of AI involves addressing issues such as data privacy, bias in AI algorithms, and the potential impact on employment.

High-TOPS AI systems must be designed and implemented with robust security measures to protect against cyber threats. This includes encryption of data, regular security audits, and the use of secure communication protocols. Ensuring the integrity and confidentiality of data is paramount in industrial automation, where breaches can have severe consequences.

Ethical considerations also involve transparency in AI decision-making processes and accountability for AI-driven actions. Industries must ensure that AI systems are fair, unbiased, and used responsibly. Implementing ethical guidelines and best practices can help mitigate risks and build trust in high-TOPS AI systems.

Conclusion

The integration of high-TOPS AI systems in industrial automation is transforming the industry, offering numerous benefits in terms of efficiency, safety, and productivity. Understanding what TOPS is in AI and how it impacts industrial automation is crucial for leveraging these technologies to their full potential.

The future of AI and TOPS in industrial automation is bright, with emerging trends and advancements promising to further revolutionize the sector. By adopting high-TOPS AI technologies, industries can achieve higher levels of operational performance and innovation. Embracing these technologies will drive the future of industrial operations, leading to smarter, more responsive systems that enhance productivity and sustainability. As we move forward, it is essential to balance technological advancements with ethical considerations and security measures to fully realize the benefits of high-TOPS AI in industrial automation.

FAQs for TOPS in AI and Industrial Automation

- What is TOPS in AI?

TOPS, or Tera Operations Per Second, is a metric used to measure the processing power of AI systems. It indicates the number of trillion operations that an AI processor can perform in one second, which is crucial for handling complex computations and large datasets.

- How does TOPS affect AI performance in industrial automation?

High TOPS values enhance AI performance by enabling faster and more efficient data processing. This is essential for real-time applications in industrial automation, such as real-time monitoring, predictive maintenance, and quality control, where rapid processing and decision-making are critical.

- What are the benefits of integrating AI in industrial automation?

Integrating AI in industrial automation improves efficiency, accuracy, and productivity. AI enables automation of routine tasks, reduces human error, optimizes resource allocation, and provides real-time insights, which enhances overall operational performance.

- Which AI technologies utilize high TOPS?

AI technologies such as machine learning, deep learning, convolutional neural networks (CNNs), and recurrent neural networks (RNNs) benefit significantly from high TOPS. These technologies are used in applications like predictive maintenance, quality control, and robotics in industrial automation.

- Can you provide an example of high-TOPS AI in manufacturing?

A leading automotive company integrated high-TOPS AI processors into its production lines to enhance quality control and predictive maintenance. The AI system analyzed images of car parts in real-time, detecting defects with high accuracy, resulting in a 30% reduction in defective products and decreased downtime.

- What challenges are associated with implementing high-TOPS AI in industrial automation?

Challenges include the need for robust infrastructure, ensuring system compatibility, high costs of AI processors, integrating AI into existing workflows, and training personnel to manage and maintain the technology. Solutions include scalable infrastructure, open standards, and comprehensive training programs.

- What are the future trends for TOPS and AI in industrial automation?

Future trends include the development of AI processors specifically designed for industrial applications, integration of AI with edge computing, and advancements in machine learning algorithms. These trends promise enhanced real-time decision-making, increased efficiency, and the adoption of autonomous systems.

International Air Freight for Technology Equipment: Why Speed and Compliance Are Non-Negotiable

In the world of global technology supply chains, timing is everything. A delayed server rack at a data center construction site means weeks of idle workers and escalating costs. A stalled shipment of networking equipment halts an entire enterprise rollout. For the IT industry, air freight is not simply a logistical option — it is the backbone of mission-critical global deployments.

This article explores the role of international air freight services in technology supply chains, the key challenges involved, and how specialized logistics providers deliver speed, security, and compliance when it matters most.

Why Air Freight Dominates Technology Hardware Logistics

Technology hardware has unique characteristics that make air cargo the preferred mode of transport over sea or road freight. IT equipment — from server racks and telecom base stations to cybersecurity appliances and GPU clusters — is high-value, often time-sensitive, and sometimes subject to tight project delivery windows.

The table below illustrates how air freight compares to alternative modes for technology hardware shipments:

| Factor | Air Freight | Sea/Land Freight |
| --- | --- | --- |
| Speed | 1–5 days | 2–6 weeks |
| Cost | Higher per kg | Lower per kg |
| Suitability (IT Hardware) | Excellent | Moderate |
| Security | High (controlled handling) | Variable |
| Customs Control | Streamlined (fewer stops) | Multiple transit points |
| Ideal for | Mission-critical, time-sensitive | Bulk, cost-sensitive cargo |

For technology companies managing global deployments across multiple countries simultaneously, air freight offers the one thing no other mode can — reliable, predictable delivery times. When a data center needs to go live on a specific date, air cargo is the only option that provides that assurance.

Key Challenges in Air Freight for IT Equipment

Despite its speed advantages, international air freight for technology hardware comes with significant operational complexity. Companies that underestimate these challenges often encounter costly delays at exactly the wrong moment.

- Customs and compliance — each country imposes different import requirements for IT and telecom equipment, including certifications, permits, and encryption declarations

- Dual-use export controls — certain categories of IT hardware (encryption devices, high-performance chips, radio frequency equipment) may require export licenses

- Dangerous goods regulations — lithium batteries, capacitors, and other electronic components may be subject to IATA dangerous goods rules

- Last-mile coordination — air freight delivers to airport facilities; reaching the final site often requires dedicated import-side logistics infrastructure

- Documentation accuracy — a single error on a customs invoice can result in shipment holds lasting days or weeks in certain countries

These challenges underscore why companies shipping technology hardware internationally need specialized logistics partners — not general freight forwarders who lack industry-specific knowledge.

The Role of the Importer of Record in Air Freight

One of the most critical components of a successful international air freight shipment is having the right Importer of Record (IOR) in the destination country. The IOR assumes legal responsibility for the import, ensuring customs clearance proceeds correctly and without penalties.

For technology companies without local entities in destination markets, working with an IOR provider is essential. The IOR handles all customs documentation, pays duties and taxes, obtains any required import permits, and ensures the shipment is released and delivered to the final address.

GetWay Global provides integrated IOR services alongside its air freight operations, enabling clients to manage the full door-to-door journey through a single provider.

Time-Critical Air Freight: When Every Hour Counts

The technology sector frequently generates scenarios where standard air freight timelines are not fast enough. Network outages, equipment failures, and emergency infrastructure deployments can require same-day or next-flight-out logistics solutions.

Time-critical air freight services offer:

- Next-flight-out (NFO) booking for urgent cargo

- 24/7 operations support for emergency shipment management

- Pre-clearance coordination to minimize customs processing times

- Direct connections with airline priority cargo handling

- Dedicated tracking and proactive exception management

GetWay Global specializes in time-critical deliveries as part of its core service offering, particularly for IT hardware deployments where project timelines are non-negotiable. The company operates with a 24-hour SLA support framework to ensure urgent shipments are handled at the highest priority.

Regional Air Freight Considerations

Different regions present different challenges and opportunities for air freight in the technology sector:

- Latin America — high customs complexity in Brazil and Argentina requires advance planning and specialist IOR support; air cargo from Europe or North America can arrive in 1–2 days but may face 5–10 days of clearance without proper documentation

- Middle East — strong growth in UAE and Saudi Arabia’s digital infrastructure creates high demand for air cargo; Dubai acts as a major regional hub for distribution across Gulf states

- Asia — China, India, and Southeast Asia are the world’s largest manufacturers and importers of IT hardware; air freight enables rapid redistribution and emergency stock movements

- Europe — the EU single market simplifies intra-European movements, but non-EU countries require full customs compliance at each border

Sustainability in Air Freight Logistics

As technology companies face increasing pressure to reduce their carbon footprints, air freight sustainability has become a key topic. Sustainable Aviation Fuel (SAF) programs are being introduced by major carriers, and logistics providers are increasingly offering carbon offset options as part of their service portfolios.

Forward-thinking logistics companies are also optimizing consolidation strategies — combining multiple smaller shipments into single aircraft loads — to reduce emissions per unit shipped. This approach benefits technology companies managing distributed deployments across multiple customer sites.

Conclusion

International air freight for technology equipment demands more than cargo capacity — it requires regulatory knowledge, customs expertise, and a reliable network of on-the-ground partners. GetWay Global delivers exactly this combination, providing air freight services integrated with IOR capabilities, warehousing, and last-mile delivery across the world’s most complex markets.

For technology companies managing global deployments, partnerships with specialists who understand both the logistics and the compliance dimensions of international air cargo are no longer optional — they are a competitive necessity.

For further reading on logistics technology trends, visit https://alltechnews.medium.com/.

Modern Breeding for Better Fresh Pepper Crops

Take a bite of a vibrant red pepper and you’re tasting the result of decades of agricultural innovation. Modern pepper breeding has transformed how farmers grow peppers and how consumers experience them, leading to a new generation of fresh pepper varieties that combine flavor, durability, and visual appeal. As global demand for fresh produce grows, breeders are working continuously to develop peppers that perform well in the field while delivering the taste and quality shoppers expect.

Across grocery stores and farmers markets worldwide, peppers are valued for their color, sweetness, and versatility. Whether used in salads, roasted dishes, or eaten raw as a snack, peppers remain one of the most popular vegetables in fresh markets. To keep pace with rising consumer expectations and environmental challenges, plant breeders are improving pepper genetics to produce crops that are both productive and resilient.

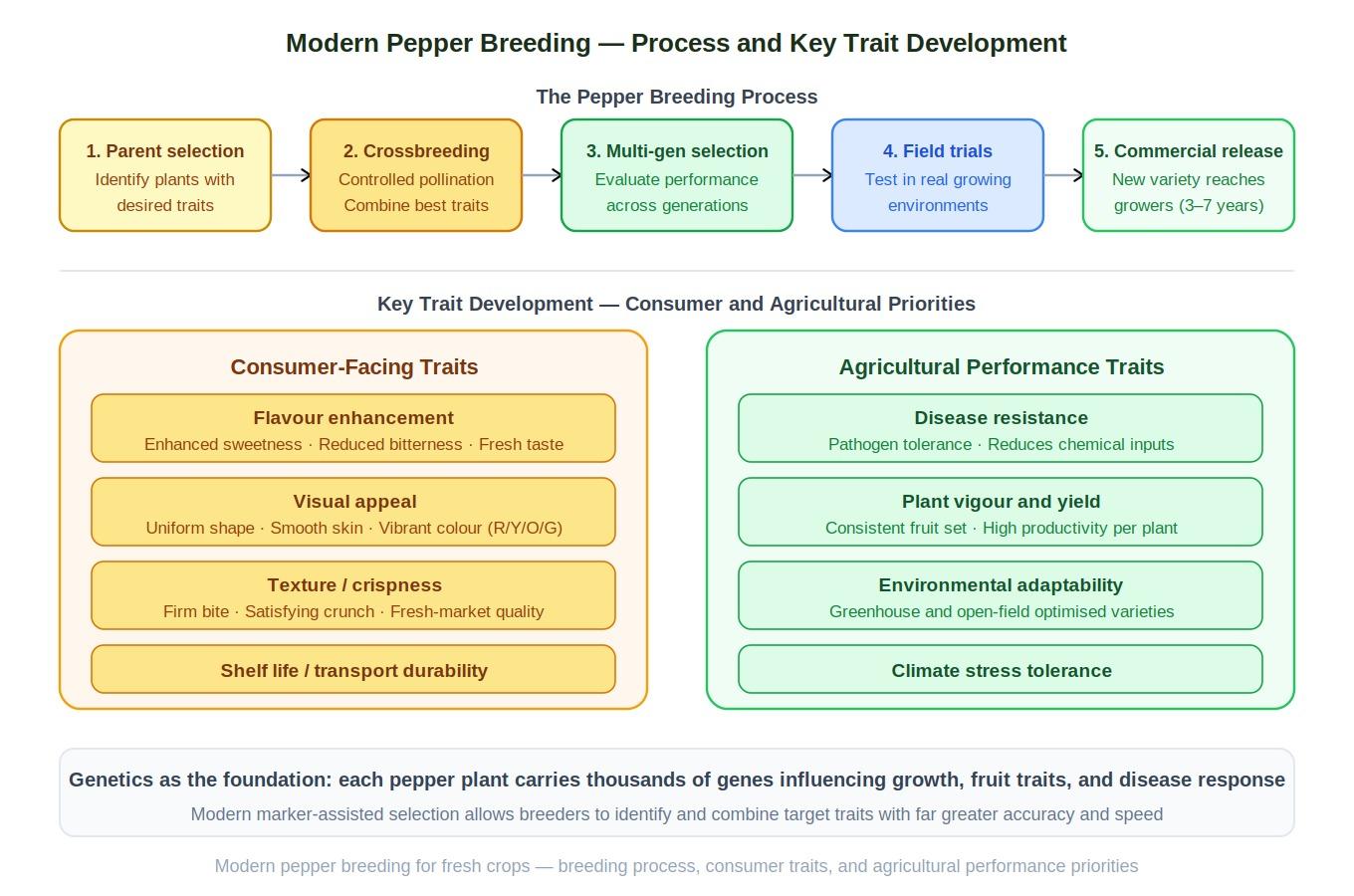

What Pepper Breeding Involves

Pepper breeding is the scientific process of developing new pepper varieties by selecting plants with desirable traits and combining them through controlled crossbreeding. The goal is to produce plants that offer improved performance for both farmers and consumers.

Breeders begin by identifying parent plants that possess valuable characteristics such as strong growth, attractive fruit shape, or exceptional flavor. These plants are crossbred to produce offspring that combine the best traits of both parents.

The resulting plants are evaluated over multiple generations. Breeders observe factors such as plant vigor, fruit quality, disease resistance, and yield. Only the strongest plants are selected for further breeding.

This process requires patience and precision, often taking several years before a new pepper variety reaches the commercial market.

Key Traits in Modern Fresh Pepper Development

Modern breeding programs focus on a range of traits that determine whether a fresh pepper variety will succeed in the marketplace. Flavor is one of the most important characteristics, as consumers increasingly expect vegetables that deliver strong taste and freshness.

Appearance also plays a significant role. Uniform shape, smooth skin, and vibrant color help peppers stand out on grocery shelves and appeal to shoppers.

Breeders also prioritize shelf life and transport durability. Peppers that remain firm and fresh during shipping help reduce waste and ensure consistent quality across supply chains.

By combining these characteristics, breeders create peppers that satisfy both agricultural performance requirements and consumer expectations.

Flavor, Color, and Consumer Appeal

Consumer preferences strongly influence breeding priorities. Over time, breeding programs have developed peppers with enhanced sweetness and reduced bitterness, making them more appealing for raw consumption.

Color diversity is another important factor. Fresh peppers appear in a wide range of shades, including green, red, yellow, orange, and even purple. These colors not only add visual appeal but also indicate different stages of ripeness and nutritional content.

Texture is equally important. Crispness is a hallmark of high-quality peppers, particularly for varieties intended to be eaten fresh.

By understanding how consumers evaluate produce, breeders can develop pepper varieties that deliver an enjoyable eating experience while maintaining agricultural reliability.

Agricultural Performance and Grower Needs

Farmers depend on crops that are reliable and efficient to grow. Pepper breeding therefore emphasizes traits that improve plant performance in real-world agricultural environments.

Disease resistance is one of the most important agricultural traits. Many pepper crops are vulnerable to plant pathogens that can reduce yield and quality. Breeding resistant varieties helps protect crops and reduces the need for chemical treatments.

Plant vigor and productivity are also critical. Strong plants with consistent fruit production allow farmers to maximize harvests while maintaining stable supply levels.

Adaptability to different growing environments is another key factor. Some pepper varieties are optimized for greenhouse cultivation, while others perform better in open-field agriculture.

Genetics and Innovation in Pepper Breeding

Genetics forms the foundation of modern crop improvement. Each pepper plant contains thousands of genes that influence its growth, fruit characteristics, and resistance to environmental stress.

By studying these genes, breeders can identify which plants carry traits that improve crop performance. Genetic diversity among pepper varieties provides a rich pool of characteristics that breeders can combine to create improved plants.

Advances in genetic research have dramatically accelerated breeding programs. Scientists can now identify genetic markers associated with valuable traits such as disease resistance or fruit sweetness.

This knowledge helps breeders focus on the most promising plant combinations, reducing the time required to develop new varieties.

Technology Accelerating Crop Development

Technological advancements have transformed the breeding process. Modern breeding programs often incorporate genomic analysis, digital imaging systems, and advanced data analytics.

Genomic tools allow researchers to analyze plant DNA and identify genes responsible for specific traits. This information helps guide breeding decisions and speeds up the development of new pepper varieties.

Digital phenotyping tools allow scientists to monitor plant growth and fruit development using automated imaging systems. These technologies provide detailed insights into how plants respond to environmental conditions.

By combining traditional breeding knowledge with advanced technology, researchers can develop improved pepper crops more efficiently than ever before.

Sustainability in Fresh Pepper Agriculture

Sustainability has become a central concern in modern agriculture. Breeding programs play a crucial role in helping farmers produce crops more efficiently while reducing environmental impact.

Improved pepper varieties may require less water, fewer fertilizers, and reduced pesticide use compared to older varieties. These traits support environmentally responsible farming practices.

Breeding also helps create plants that tolerate challenging conditions such as heat, drought, or soil variability. These improvements allow farmers to maintain productivity even as climate conditions change.

Sustainable crop development ensures that agriculture can continue providing nutritious food while protecting natural resources.

The Future of Fresh Pepper Breeding

The future of pepper breeding will likely involve even more advanced scientific tools. Artificial intelligence is beginning to assist researchers in analyzing complex genetic data and predicting plant performance.

Climate resilience will remain a key priority as breeders work to develop crops capable of thriving in increasingly unpredictable environmental conditions.

Breeding programs will also continue exploring specialty pepper varieties that appeal to evolving consumer preferences. These may include peppers with unique shapes, flavors, or enhanced nutritional content.

As agricultural science progresses, fresh peppers will continue evolving into crops that meet the needs of both farmers and consumers.

Conclusion

Fresh peppers may appear simple, but the science behind them is remarkably complex. Through careful selection, genetic research, and technological innovation, breeders have transformed peppers into highly adaptable and productive crops.

Pepper breeding continues to drive improvements in crop performance, helping farmers produce reliable harvests while delivering flavorful produce to consumers.

As agricultural challenges evolve, modern breeding programs will remain essential for developing the next generation of fresh pepper varieties that support sustainable and resilient food systems.

Drone-UAV RF Communication: The Backbone of Modern Aerial Operations

Drone-UAV RF Communication is revolutionizing the way drones operate, serving as the foundation for reliable, efficient, and innovative aerial systems. From ensuring seamless connectivity to enabling advanced maneuvers, this technology plays a pivotal role in modern drone operations. Its ability to provide consistent and secure communication is what makes it indispensable for both commercial and defense applications.

Unmanned Aerial Vehicles (UAVs), commonly known as drones, have become a pivotal technology across industries such as defense, agriculture, logistics, and surveillance. At the core of a drone’s functionality is its communication system, which enables control, data transfer, and situational awareness. Radio Frequency (RF) communication plays a crucial role in ensuring that UAVs can operate effectively in a variety of environments, with high reliability and low latency.

This article delves into the significance of RF communication in Drone-UAV operations, the challenges it presents, the technologies involved, and how future advancements are shaping the communication systems for UAVs.

The Role of RF Communication in Drone-UAV Operations

RF communication is the medium through which most drones communicate with ground control stations (GCS), onboard systems, and other UAVs in a network. It enables the transmission of various types of data, including:

Control Signals: These are essential for operating the UAV, including commands for takeoff, landing, navigation, and flight adjustments.

Telemetry Data: Real-time data on the UAV’s performance, including altitude, speed, battery level, and sensor readings.

Video and Sensor Data: Drones equipped with cameras or other sensors (such as thermal, LiDAR, or multispectral) require high-bandwidth RF communication to send video feeds or sensor data back to the ground station.

Payload Data: UAVs used for specific tasks like delivery or surveillance may need to transmit payload-related data, such as GPS coordinates, images, or diagnostic information.

Given the variety of data types and the need for real-time communication, a robust and reliable RF communication system is essential for the successful operation of drones in both civilian and military applications.

RF Communication Technologies for Drone-UAVs

The communication requirements of drones are diverse, necessitating different RF communication technologies and frequency bands. These technologies are designed to address challenges such as range, interference, data rate, and power consumption.

1. Frequency Bands

The RF spectrum is divided into several frequency bands, and each is used for different types of communication in UAV systems. The most commonly used frequency bands for drone communications are:

2.4 GHz: This band is one of the most popular for consumer-grade drones. It offers a good balance of range and data transfer speed, although it is prone to interference from other wireless devices (such as Wi-Fi routers and Bluetooth devices).

5.8 GHz: This band is often used for high-definition video transmission in drones, as it offers higher data rates than 2.4 GHz, but with a slightly shorter range. It’s less crowded than 2.4 GHz and typically experiences less interference.

Sub-1 GHz (e.g., 900 MHz): This frequency is used for long-range communications, as lower frequencies tend to travel farther and penetrate obstacles more effectively. It’s ideal for military drones or those used in remote areas.

L, S, and C Bands: These bands are used in military and commercial UAVs for long-range communication, often for surveillance, reconnaissance, and tactical operations. These frequencies have lower susceptibility to interference and are better suited for higher-power transmissions.
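The claim that lower frequencies travel farther can be made concrete with the standard free-space path loss formula, FSPL(dB) = 20 log10(d_km) + 20 log10(f_MHz) + 32.44. The short sketch below compares three of the bands listed above at the same distance. It assumes ideal free-space propagation with no obstacles, so real-world losses will be higher.

```python
import math


def free_space_path_loss_db(distance_km: float, frequency_mhz: float) -> float:
    """Free-space path loss in dB for a distance in km and a frequency in MHz."""
    return 20 * math.log10(distance_km) + 20 * math.log10(frequency_mhz) + 32.44


# Compare the same 1 km link on three common drone bands.
for frequency_mhz in (900, 2400, 5800):
    loss_db = free_space_path_loss_db(1.0, frequency_mhz)
    print(f"{frequency_mhz} MHz at 1 km: {loss_db:.1f} dB")
```

Under this model a 900 MHz link loses about 8.5 dB less than a 2.4 GHz link over the same distance, which is one reason sub-1 GHz bands are favored for long-range control.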

2. Modulation Techniques

The RF communication system in drones uses different modulation techniques to efficiently transmit data. Modulation refers to the method of encoding information onto a carrier wave for transmission. Some common modulation techniques used in UAV RF communication include:

Frequency Modulation (FM): Often used for control signals, FM is simple to implement and resists amplitude noise, which helps keep the link clear in the presence of interference.

Amplitude Modulation (AM): Used for video and lower-bandwidth applications, AM transmits a signal whose amplitude is varied to carry the information.

Phase Shift Keying (PSK) and Quadrature Amplitude Modulation (QAM): These more advanced techniques allow for high data transfer rates, making them ideal for transmitting high-definition video or large sensor datasets.
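To see why higher-order PSK and QAM schemes support higher data rates, note that a constellation with M points carries log2(M) bits per symbol. The sketch below computes the raw bit rate for a few schemes at the same symbol rate. The 1 Msymbol/s figure is an illustrative assumption, and the result ignores coding overhead and the higher signal-to-noise ratio that denser constellations need.

```python
def bits_per_symbol(constellation_points: int) -> int:
    """Bits carried by one symbol of an M-point constellation (M a power of two)."""
    if constellation_points < 2 or constellation_points & (constellation_points - 1):
        raise ValueError("constellation size must be a power of two, at least 2")
    return constellation_points.bit_length() - 1


def raw_bit_rate_bps(symbol_rate_baud: float, constellation_points: int) -> float:
    """Raw (uncoded) bit rate for a given symbol rate and constellation size."""
    return symbol_rate_baud * bits_per_symbol(constellation_points)


SYMBOL_RATE_BAUD = 1_000_000  # illustrative assumption: 1 Msymbol/s
for name, points in (("BPSK", 2), ("QPSK", 4), ("16-QAM", 16), ("64-QAM", 64)):
    rate_mbps = raw_bit_rate_bps(SYMBOL_RATE_BAUD, points) / 1e6
    print(f"{name}: {bits_per_symbol(points)} bits/symbol, {rate_mbps:.0f} Mbit/s")
```

At the same symbol rate, 64-QAM carries six times the raw data of BPSK, at the cost of needing a cleaner signal to tell the constellation points apart.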

3. Signal Encoding and Error Correction

To ensure that RF communication remains stable and reliable, especially in noisy or crowded environments, drones use advanced signal encoding and error correction methods. These techniques help to mitigate the impact of signal interference, fading, and packet loss. Common methods include:

Forward Error Correction (FEC): This involves adding redundant data to the transmitted signal so that errors can be detected and corrected at the receiver end.

Diversity Reception: Drones may employ multiple antennas or receivers, allowing them to receive signals from different directions and improve the overall reliability of communication.

Spread Spectrum Techniques: Methods like Frequency Hopping Spread Spectrum (FHSS) or Direct Sequence Spread Spectrum (DSSS) spread the signal over a wider bandwidth, making it more resistant to jamming and interference.
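A small worked example may help show how forward error correction recovers from a bit error without retransmission. The sketch below uses the classic Hamming(7,4) code, which adds three parity bits to every four data bits and can correct any single flipped bit. It is chosen here purely for illustration; the article does not say which codes drone links use, and production systems typically rely on stronger codes.

```python
def hamming74_encode(data_bits: list) -> list:
    """Encode 4 data bits into a 7-bit codeword laid out as [p1, p2, d1, p3, d2, d3, d4]."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # covers codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword: list) -> list:
    """Correct up to one flipped bit and return the 4 original data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_position = s1 + 2 * s2 + 4 * s3  # 1-based; 0 means no error detected
    if error_position:
        c[error_position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


sent = hamming74_encode([1, 0, 1, 1])
received = list(sent)
received[4] ^= 1  # simulate a single bit flipped by noise on the channel
print(hamming74_decode(received))
```

The receiver recovers the original four bits even though one bit of the codeword was corrupted, which is the essential trade FEC makes: extra bits on the air in exchange for fewer lost packets.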

4. Long-Range Communication

For long-range missions, RF communication technology needs to go beyond traditional line-of-sight communication. To achieve this, drones can leverage various technologies:

Satellite Communication (SATCOM): When beyond-visual-line-of-sight (BVLOS) operations are required, drones can use satellite links (via L, S, or Ku-band frequencies) to maintain constant communication with the ground station.

Cellular Networks: 4G LTE and 5G networks are increasingly being used for drone communication, especially in urban environments. 5G, in particular, offers ultra-low latency, high-speed data transfer, and extensive coverage.

Mesh Networking: Some UAVs can form mesh networks where each drone communicates with others in the fleet, extending the range of the communication system and providing redundancy.
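The range extension and redundancy offered by mesh networking can be sketched as a path search over the links between nodes. In the example below, the node names and link table are hypothetical, and a breadth-first search stands in for whatever routing protocol a real fleet would use.

```python
from collections import deque


def find_relay_path(links: dict, source: str, destination: str):
    """Return the fewest-hop path from source to destination, or None if unreachable."""
    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == destination:
            return path
        for neighbor in links.get(node, ()):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


# Hypothetical fleet: the ground station reaches only drone_a directly.
links = {
    "gcs": ["drone_a"],
    "drone_a": ["gcs", "drone_b", "drone_c"],
    "drone_b": ["drone_a", "drone_d"],
    "drone_c": ["drone_a", "drone_d"],
    "drone_d": ["drone_b", "drone_c"],
}
print(find_relay_path(links, "gcs", "drone_d"))

# Redundancy: if drone_b drops out, traffic can still be relayed through drone_c.
links_without_b = {n: [m for m in ms if m != "drone_b"]
                   for n, ms in links.items() if n != "drone_b"}
print(find_relay_path(links_without_b, "gcs", "drone_d"))
```

The distant drone stays reachable through intermediate drones, and losing one relay does not break the link as long as another path exists.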

Challenges in Drone-UAV RF Communication

While RF communication is essential for UAVs, it presents several challenges that need to be addressed to ensure the reliable and secure operation of drones.

1. Interference and Jamming

One of the biggest threats to RF communication in drones is interference from other electronic systems or intentional jamming. Drones, especially in crowded or military environments, must be capable of avoiding interference from various sources, such as:

Other drones operating on the same frequencies.

Wireless communication systems like Wi-Fi or Bluetooth.

Intentional jamming by adversaries in conflict zones or hostile environments.

To mitigate these issues, drones use frequency hopping, spread spectrum techniques, and advanced error-correction algorithms to make communication more resilient.
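Frequency hopping works because the transmitter and receiver derive the same channel sequence from a shared secret, while an outside observer cannot predict the next channel. The sketch below illustrates only that idea. The channel plan and seed are made-up values, and Python's `random` module is not cryptographically secure, so a real system would use a keyed, secure generator instead.

```python
import random

# Hypothetical channel plan (assumption): 40 channels, 2 MHz apart, in the 2.4 GHz band.
CHANNELS_MHZ = [2402 + 2 * index for index in range(40)]


def hop_sequence(shared_seed: int, channels: list, hops: int) -> list:
    """Derive a pseudo-random channel sequence from a seed known to both ends."""
    generator = random.Random(shared_seed)
    return [generator.choice(channels) for _ in range(hops)]


# Both ends compute the sequence independently and stay in step.
drone_hops = hop_sequence(shared_seed=0xC0FFEE, channels=CHANNELS_MHZ, hops=8)
ground_hops = hop_sequence(shared_seed=0xC0FFEE, channels=CHANNELS_MHZ, hops=8)
print(drone_hops == ground_hops)
```

Because the link dwells on each channel only briefly, a narrowband jammer or a busy Wi-Fi channel disrupts a fraction of the transmissions rather than the whole link.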

2. Limited Range and Power Constraints

The effective range of RF communication in drones is limited by factors such as transmitter power, antenna design, and frequency band characteristics. While UAVs with longer ranges can use lower frequencies like 900 MHz or satellite links, they are often limited by battery life and payload capacity.

The trade-off between range and power consumption is an ongoing challenge. Designers must balance the transmit power needed to maintain communication against the battery capacity available for flight time.
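The interaction of transmitter power, antenna gain, and frequency can be estimated with a simple link budget. The sketch below solves the free-space path loss formula for distance, given the loss the link can tolerate. All of the numbers (20 dBm transmit power, 2 dBi antennas, -90 dBm receiver sensitivity, 10 dB fade margin) are illustrative assumptions rather than figures for any specific drone.

```python
import math


def max_free_space_range_km(tx_power_dbm: float, tx_gain_dbi: float,
                            rx_gain_dbi: float, rx_sensitivity_dbm: float,
                            frequency_mhz: float, fade_margin_db: float = 10.0) -> float:
    """Estimate the maximum range in km at which the link still closes in free space."""
    # Largest path loss the link can absorb while keeping the fade margin.
    allowed_loss_db = (tx_power_dbm + tx_gain_dbi + rx_gain_dbi
                       - rx_sensitivity_dbm - fade_margin_db)
    # Invert FSPL(dB) = 20*log10(d_km) + 20*log10(f_MHz) + 32.44 for d_km.
    exponent = (allowed_loss_db - 32.44 - 20 * math.log10(frequency_mhz)) / 20
    return 10 ** exponent


for frequency_mhz in (900, 2400):
    range_km = max_free_space_range_km(20, 2, 2, -90, frequency_mhz)
    print(f"{frequency_mhz} MHz: about {range_km:.1f} km")
```

With these assumed values the estimate is roughly 4.2 km at 900 MHz against roughly 1.6 km at 2.4 GHz. Every additional 6 dB of transmit power doubles the free-space range, but it also quadruples the power drawn by the transmitter's output stage, which is the trade-off against flight time.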

3. Security Risks

The RF communication channel is vulnerable to security threats, such as signal interception, spoofing, and hacking. Unauthorized access to the communication link could compromise the integrity of the UAV’s operations or allow malicious actors to take control of the drone.

To secure drone communications, encryption algorithms such as AES (Advanced Encryption Standard) and secure transport protocols such as TLS (Transport Layer Security) are employed, ensuring that only authorized parties can decrypt and interpret the transmitted data.
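Where a drone link runs over an IP network, such as a cellular connection, TLS can be configured with Python's standard `ssl` module. The sketch below shows a client-side context that verifies the ground station's certificate and refuses old protocol versions. The function name `make_link_tls_context` is hypothetical, and the sketch assumes an IP-based link; raw RF control links usually apply encryption at a lower layer.

```python
import ssl


def make_link_tls_context() -> ssl.SSLContext:
    """Build a client TLS context that verifies the peer and requires TLS 1.2 or newer."""
    # create_default_context enables certificate and hostname verification.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_link_tls_context()
print(context.verify_mode == ssl.CERT_REQUIRED, context.check_hostname)
```

Certificate verification is what ties the encrypted channel to an authorized endpoint; encryption without it would still protect against eavesdropping but not against an impostor ground station.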

4. Latency and Data Throughput

For applications that require real-time control and feedback, such as autonomous drones or those used in first-responder scenarios, low-latency communication is crucial. High latency could delay mission-critical decisions, especially in dynamic environments like search and rescue operations or military engagements. Additionally, high-data-throughput applications like video streaming require RF systems with robust bandwidth management.
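A rough latency budget shows where the delay in a link comes from. The sketch below adds propagation delay (distance divided by the speed of light) to serialization delay (packet size divided by link rate). The packet size, link rate, and distances are illustrative assumptions, and the satellite case assumes a geostationary satellite directly overhead, which the discussion above does not specify.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def one_way_latency_ms(distance_m: float, packet_bytes: int,
                       link_rate_bps: float, processing_ms: float = 0.0) -> float:
    """Sum propagation, serialization, and fixed processing delay in milliseconds."""
    propagation_ms = distance_m / SPEED_OF_LIGHT_M_PER_S * 1000
    serialization_ms = packet_bytes * 8 / link_rate_bps * 1000
    return propagation_ms + serialization_ms + processing_ms


# Direct 5 km radio link carrying a 1200-byte video packet at 10 Mbit/s.
direct_ms = one_way_latency_ms(5_000, 1200, 10e6)

# Same packet relayed via a geostationary satellite (about 35,786 km up, then down).
satellite_ms = one_way_latency_ms(2 * 35_786_000, 1200, 10e6)

print(f"direct: {direct_ms:.2f} ms, satellite: {satellite_ms:.0f} ms")
```

On the short direct link, serialization dominates and the total stays near one millisecond, while the geostationary path adds roughly a quarter of a second of propagation delay that no amount of bandwidth can remove.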

Future Trends in Drone-UAV RF Communication

As UAV technology continues to advance, so will the communication systems that support it. Key trends in the future of drone RF communication include:

5G and Beyond: The rollout of 5G networks is expected to revolutionize drone communications with ultra-low latency, high bandwidth, and greater network density. This will enable more drones to operate simultaneously in urban environments, enhance remote operation, and facilitate advanced applications such as drone swarming and real-time video streaming.

Artificial Intelligence (AI) for Dynamic Communication: AI-powered algorithms can optimize communication links based on environmental conditions, such as avoiding interference, adjusting frequencies, and ensuring maximum data throughput. AI will also play a role in improving autonomous decision-making for UAVs in communication-heavy operations.

Integration with IoT: Drones are increasingly integrated into the Internet of Things (IoT) ecosystem. As a result, drones will not only communicate with ground control but also with other devices and systems in real-time. This opens new possibilities for industrial applications like smart farming, precision delivery, and environmental monitoring.

RF communication is at the heart of every drone’s operation, whether for military, industrial, or commercial use. As UAV technology continues to evolve, so too must the communication systems that support it. RF communication technologies are enabling drones to perform increasingly complex tasks, from surveillance and reconnaissance to logistics and environmental monitoring.

Despite the challenges posed by interference, range limitations, and security risks, advances in RF technology, coupled with innovations like 5G and AI, promise to take UAV communication systems to new heights, fostering more reliable, secure, and efficient operations across a range of industries.
